Training Prompt-only Steering Vectors in a Principled Manner

Our recent work on prompt-only SVs and SV training dynamics.

In our paper, we propose a principled training framework for steering vectors (SVs) and introduce the Prompt-Only Steering Vector (PrOSV). Several readers (my advisor, fellow students, and reviewers) have complained that the theoretical derivation of SV training dynamics is too dense and hard to read. However, I hesitate to put informal interpretations into a research paper. This post collects those informal but intuitive explanations, to help readers understand what we were trying to state through the definitions and equations.

TL;DR

- Stochastic gradient descent is not a panacea for all optimization problems.

- Neural network scaling theory is a useful theoretical tool for predicting training dynamics.

- A rank-1 prompt-only intervention is sufficient for concept-based steering.

Introduction

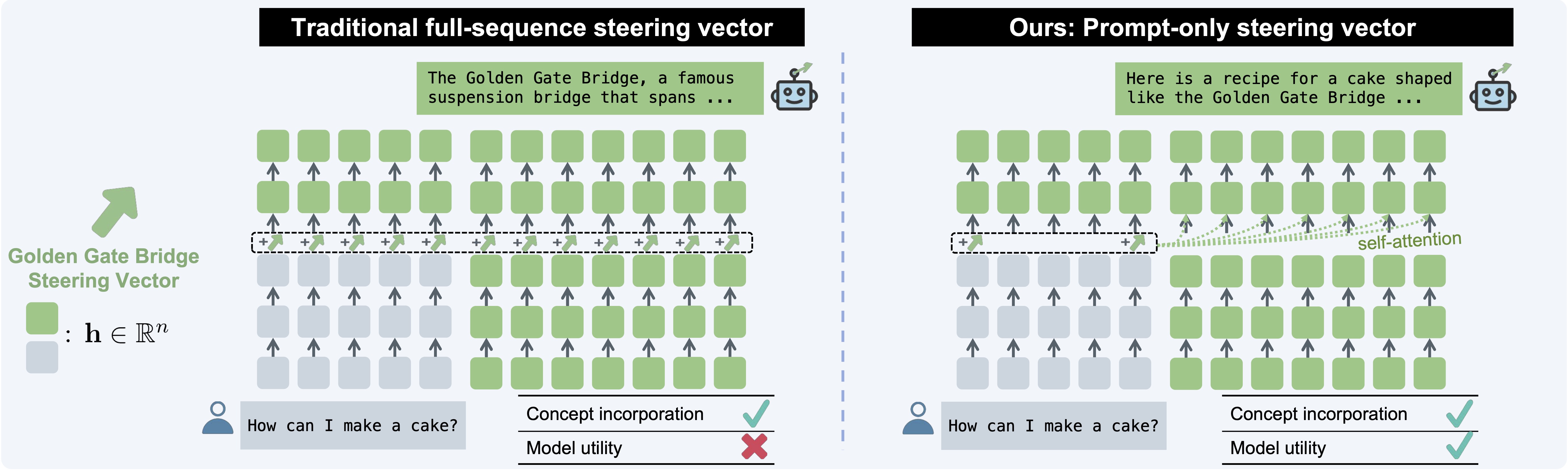

The SV approach achieves model control by adding a fixed vector to model representations. As shown in Figure 1, most prior works apply SVs at every token position and achieve effective concept-based steering. However, we find that the performance of the steered model drops severely. We attribute this problem to the full-sequence nature of such interventions: full-sequence SVs (FSSVs) might interfere too much with the generation process and thus harm general capabilities.

Therefore, we hypothesize that we can better preserve model capabilities by limiting the amount of intervention. One possible approach is to limit the number of intervened tokens and apply prompt-only interventions. Beyond minimal interference, this approach also reduces computational cost: it restricts intervention to the prefill stage, so the intervention overhead does not scale with context length.
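To make the difference between the two intervention styles concrete, here is a minimal NumPy sketch. It is a toy illustration rather than our actual implementation: `apply_steering` and its `prompt_len` argument are hypothetical names, and the hidden states are plain arrays instead of the activations of a real model.

```python
import numpy as np

def apply_steering(hidden, v, alpha=1.0, prompt_len=None):
    """Add alpha * v to hidden states of shape (seq_len, n).

    prompt_len=None steers every position (full-sequence SV);
    otherwise only the first prompt_len positions are steered (prompt-only SV).
    """
    hidden = np.asarray(hidden, dtype=float)
    steered = hidden.copy()
    end = hidden.shape[0] if prompt_len is None else prompt_len
    steered[:end] += alpha * np.asarray(v, dtype=float)
    return steered

# Toy sequence: 4 prompt tokens followed by 2 generated tokens.
hidden = np.zeros((6, 4))
v = np.ones(4)

full = apply_steering(hidden, v, alpha=2.0)                       # all 6 rows shifted
prompt_only = apply_steering(hidden, v, alpha=2.0, prompt_len=4)  # only rows 0..3 shifted
```

In a real transformer, generated tokens still attend to the steered prompt positions, so a prompt-only intervention can influence generation even though no vector is added during decoding.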

Prior work has shown the feasibility of the prompt-only approach to model control, including prefix tuning. In the location notation used here, p4 denotes intervention on 4 prefix tokens, s2 denotes intervention on 2 suffix tokens, and p4+s2 intervenes on prefix and suffix locations simultaneously. Since we focus on training-based methods, we do not build directly upon function vectors and task vectors, but we study s1 interventions as part of our location search process. Prefix tuning is essentially the soft prompt approach, which expands the context rather than modifying representations in place, so we also leave it out of the discussion.
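The location notation can be read as a small position-selection rule. The sketch below is a hypothetical helper (`intervention_positions` is not from any library) that assumes 0-indexed prompt positions and clamps counts that exceed the prompt length; both choices are assumptions made for illustration.

```python
import re

def intervention_positions(spec, prompt_len):
    """Return sorted prompt positions for a spec such as 'p4', 's2', or 'p4+s2'."""
    positions = set()
    for part in spec.split("+"):
        match = re.fullmatch(r"([ps])(\d+)", part)
        if match is None:
            raise ValueError(f"unrecognized location spec: {part!r}")
        kind = match.group(1)
        count = min(int(match.group(2)), prompt_len)  # clamp for short prompts
        if kind == "p":
            positions.update(range(count))                           # first `count` tokens
        else:
            positions.update(range(prompt_len - count, prompt_len))  # last `count` tokens
    return sorted(positions)

print(intervention_positions("p4+s2", prompt_len=10))  # [0, 1, 2, 3, 8, 9]
print(intervention_positions("s1", prompt_len=10))     # [9]
```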

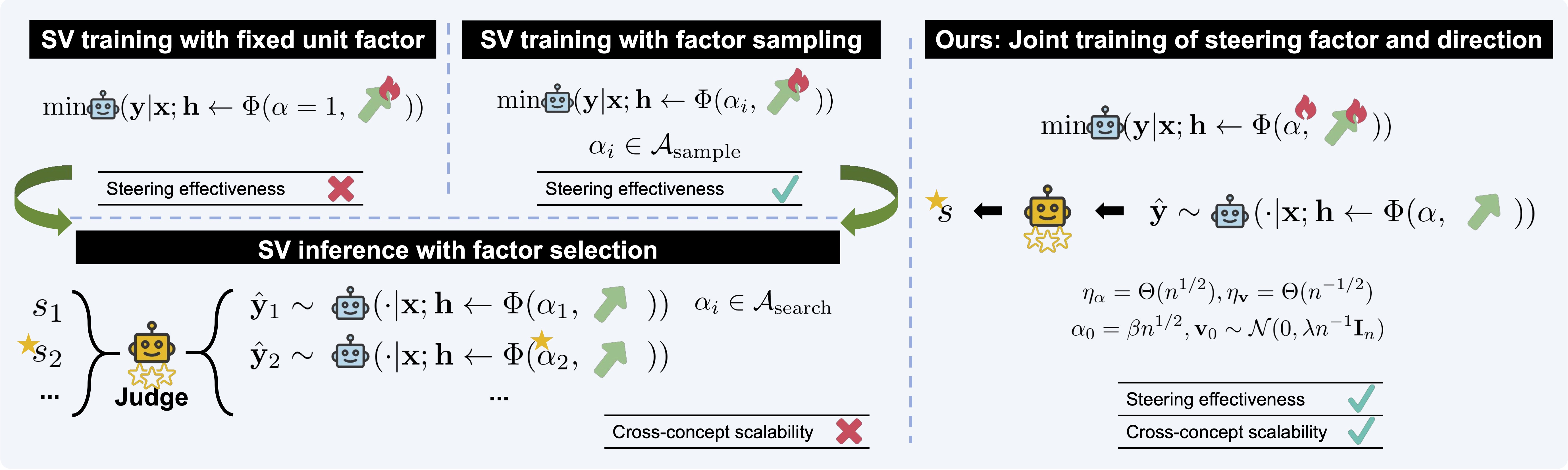

Joint training of steering factors and directions does not work out of the box

The motivation for using prompt-only SVs is quite straightforward, but the motivation for special designs in SV training is less obvious. As shown in Figure 2, current SV-based approaches always require a factor selection process after SV training, where steered responses are sampled using factors from a predesignated search grid. The final factor is chosen based on the quality of the steered responses. In AxBench, the evaluation metric is the overall steering score, which is the harmonic mean of the concept score (how well the concept is incorporated into a response), the instruct score (how well a response is related to the instruction), and the fluency score (how fluent a response is). The common AxBench setup samples 10 responses for each of the 14 factors in a search grid, i.e., 140 responses in total for each concept. This can be costly when there are many concepts to steer. It would be more convenient to put trained SVs to use immediately after training.
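The factor selection process described above can be sketched in a few lines. This is an illustrative sketch, not AxBench code: `overall_score` and `select_factor` are hypothetical names, the score triples in `toy` are made up, and averaging the overall score over the samples for each factor is an assumption about how the final factor is picked.

```python
def overall_score(concept, instruct, fluency):
    """Harmonic mean of the three scores; zero if any score is zero."""
    scores = (concept, instruct, fluency)
    if min(scores) <= 0:
        return 0.0
    return len(scores) / sum(1.0 / s for s in scores)

def select_factor(scores_by_factor):
    """Pick the factor whose sampled responses have the highest mean overall score."""
    def mean_overall(triples):
        return sum(overall_score(*t) for t in triples) / len(triples)
    return max(scores_by_factor, key=lambda f: mean_overall(scores_by_factor[f]))

# Cost of the grid search for one concept: 14 factors x 10 sampled responses.
num_factors, samples_per_factor = 14, 10
print(num_factors * samples_per_factor)  # 140

# Hypothetical (concept, instruct, fluency) scores for three factors.
toy = {
    0.5: [(0.0, 2.0, 2.0)],  # too weak: concept missing
    1.0: [(1.0, 2.0, 2.0)],  # balanced
    4.0: [(2.0, 0.0, 1.0)],  # too strong: instruction ignored
}
print(select_factor(toy))  # 1.0
```

Because the harmonic mean collapses to zero when any one score is zero, a factor that is too small (no concept) or too large (instruction ignored) is penalized heavily, which is why the grid has to be searched in the first place.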

This calls for joint training of steering factors and directions for both FSSVs and PrOSVs. Joint training makes SVs usable end to end, removes the factor selection process, and improves the scalability of SVs over large sets of concepts. One might object that joint training is trivial to implement and that stochastic gradient descent would take care of the rest. We found that this is not the case: when we fix the steering factor to $\alpha=1$ and train only the steering direction, the resulting SV is totally unusable.

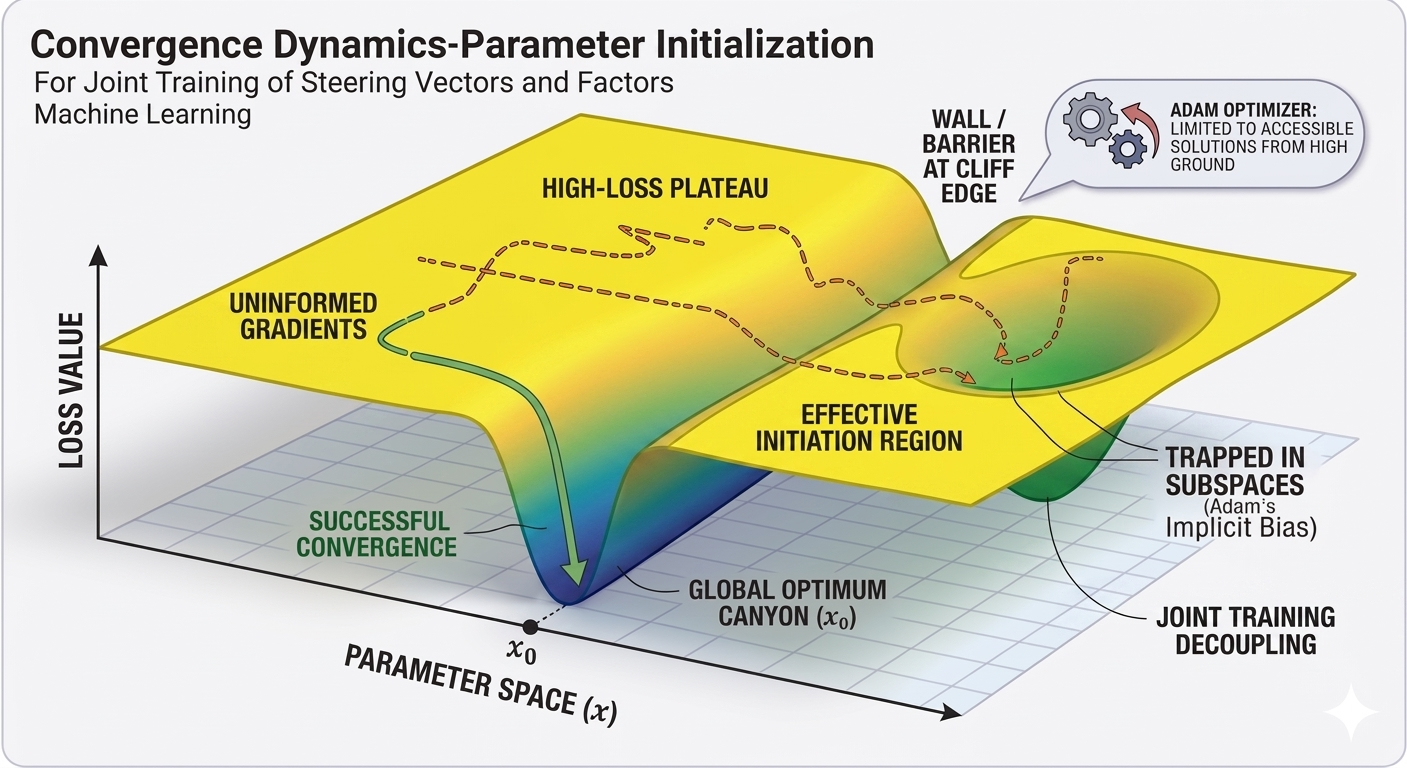

We hypothesize that this is due to the limited expressiveness of the SV. We focus on the simplest form of SV, where a fixed vector is added to representations $\mathbf{h} \in \mathbb{R}^n$: $\Phi(\mathbf{h}; \alpha, \mathbf{v}) = \mathbf{h} + \alpha \mathbf{v}$, where $\mathbf{v}$ is the steering direction and $\alpha$ is the steering factor. This functional form is simply an input-agnostic, additive rank-1 bias; therefore, it cannot model inter-token interactions. The SV approach, parameterized by $(\alpha, \mathbf{v}) \in \mathbb{R} \times \mathbb{R}^n$, is also fundamentally less expressive than ReFT-like approaches parameterized by $(\mathbf{w}, \mathbf{v}) \in \mathbb{R}^n \times \mathbb{R}^n$, e.g., $\Phi(\mathbf{h}; \mathbf{w}, \mathbf{v}) = \mathbf{h} + \mathbf{v} \mathbf{w}^\top \mathbf{h}$. The extra expressiveness makes ReFT less sensitive to hyperparameter configurations, since its functional form allows it to compensate for suboptimal initializations. In contrast, SV requires careful selection of learning rates and initialization strategies.
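The two functional forms are easy to compare numerically. The sketch below implements both formulas as written above, using random vectors as stand-ins for real representations. The function names `sv` and `reft_like` are just labels for this illustration.

```python
import numpy as np

def sv(h, alpha, v):
    """Steering vector: h + alpha * v (the shift ignores h)."""
    return h + alpha * v

def reft_like(h, w, v):
    """ReFT-like rank-1 update: h + v * (w^T h) (the shift scales with h)."""
    return h + v * (w @ h)

rng = np.random.default_rng(0)
n = 8
h1, h2 = rng.normal(size=n), rng.normal(size=n)  # two different representations
v, w = rng.normal(size=n), rng.normal(size=n)
alpha = 1.5

# The SV shift is the same vector alpha * v for every input.
sv_shift_1 = sv(h1, alpha, v) - h1
sv_shift_2 = sv(h2, alpha, v) - h2

# The ReFT-like shift also lies along v, but its magnitude w^T h depends on the input.
reft_shift_1 = reft_like(h1, w, v) - h1
reft_shift_2 = reft_like(h2, w, v) - h2

print(np.allclose(sv_shift_1, sv_shift_2))      # True
print(np.allclose(reft_shift_1, reft_shift_2))  # False
```

In other words, the ReFT-like form behaves like an SV whose steering factor $\mathbf{w}^\top \mathbf{h}$ is chosen for each input, whereas the SV must rely on a single $\alpha$ for all inputs.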

Furthermore, the expressiveness bottleneck of SV is compounded by the implicit bias of the optimizer, which limits the set of solutions reachable from a given initialization point. Figure 3 illustrates these explanations intuitively, if less rigorously.

If you found this useful, please cite this as:

Bao, Yuntai (May 2026). Training Prompt-only Steering Vectors in a Principled Manner. colored-dye’s blog. https://colored-dye.github.io.

or as a BibTeX entry:

@misc{bao2026training,

title = {Training Prompt-only Steering Vectors in a Principled Manner},

author = {Bao, Yuntai},

note = {Blog post},

year = {2026},

month = {May},

url = {https://colored-dye.github.io/blog/2026/prompt-only-sv/}

}