A Personal Review of *PO Algorithms

A review of Policy Optimization and Preference Optimization algorithms.

In this blog post, I provide an informal review of *PO (i.e., Policy Optimization or Preference Optimization) algorithms, with a focus on Group Relative Policy Optimization (GRPO).

Roadmap.

- The classic RL algorithm, Proximal Policy Optimization (PPO);

- GRPO, which removes the need for the value model that PPO requires;

- DPO, which directly optimizes on preference pairs and removes the need for a reward model.

PPO

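PPO is an actor-critic algorithm widely used for RL fine-tuning of LLMs. It maximizes the following clipped surrogate objective:

\[\mathcal{J}_{\text{PPO}}(\theta) = \underset{\substack{q \sim P(Q), \\ o \sim \pi_{\theta_{\text{old}}}(O \vert q)}}{\mathbb{E}} \left[ \frac{1}{\vert o \vert } \sum_{t=1}^{\vert o \vert} \min \left[ \frac{\pi_{\theta}(o_{t} \vert q, o_{\lt t})}{\pi_{\theta_{\text{old}}}(o_{t} \vert q, o_{\lt t})} A_{t}, \mathrm{clip}\left( \frac{\pi_{\theta}(o_{t} \vert q, o_{\lt t})}{\pi_{\theta_{\text{old}}}(o_{t} \vert q, o_{\lt t})}, 1-\epsilon, 1+\epsilon \right) A_{t} \right] \right],\]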

where $\pi_\theta$ and $\pi_{\theta_\text{old}}$ are the current and old policy models, respectively; $q$ is a question sampled from the question distribution and $o$ is an output sampled from $\pi_{\theta_\text{old}}$; $\epsilon$ is the clipping hyperparameter; and $A_t$ is the advantage, computed via Generalized Advantage Estimation (GAE) from the rewards $\{ r_{\geq t} \}$ and a learned value model $V_\psi$. The value model is trained together with the policy model.

The standard approach to obtaining rewards is to add a per-token KL penalty with respect to a reference model:

\begin{equation}\label{eq:ppo_reward} r_t = r_\phi(q, o_{\leq t}) - \beta \log \frac{\pi_{\theta}(o_t \vert q, o_{\lt t})}{\pi_{\text{ref}}(o_t \vert q, o_{\lt t})}, \end{equation}

where $r_\phi$ is the reward model, $\pi_\text{ref}$ is the reference model (usually the initial policy), and $\beta$ controls the strength of the KL penalty.
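To make the two PPO ingredients concrete, here is a minimal pure-Python sketch of the KL-penalized per-token reward and of the standard clipped surrogate (probability ratio clipped to $[1-\epsilon, 1+\epsilon]$) for a single sampled output. The function names and the list-of-log-probabilities interface are hypothetical, chosen for illustration. The sketch also assumes that the reward model scores only the complete output, so its score is added at the last token.

```python
import math


def kl_shaped_rewards(rm_score, logp_policy, logp_ref, beta):
    """Per-token rewards r_t = -beta * log(pi_theta / pi_ref).

    Assumption: the reward model scores only the full output, so its
    score `rm_score` is added to the reward of the last token.
    """
    rewards = [-beta * (lp - lr) for lp, lr in zip(logp_policy, logp_ref)]
    rewards[-1] += rm_score
    return rewards


def ppo_clipped_objective(logp_new, logp_old, advantages, eps):
    """Length-normalized clipped surrogate for one sampled output."""
    total = 0.0
    for ln, lo, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(ln - lo)  # pi_theta / pi_theta_old for this token
        clipped = min(max(ratio, 1.0 - eps), 1.0 + eps)
        # Taking the min makes the objective a pessimistic bound.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

With a positive advantage, the objective stops growing once the ratio exceeds $1+\epsilon$; with a negative advantage, the unclipped term is kept whenever it is smaller, so moving further in the harmful direction is still penalized.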

GAE
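As mentioned in the PPO section, the advantage $A_t$ is computed from the rewards and the value model $V_\psi$ via GAE. A minimal sketch of the standard GAE recursion follows. The discount factor `gamma` and the mixing coefficient `lam` are the usual GAE hyperparameters; they are not named elsewhere in this post, and their defaults here are only illustrative. The sketch further assumes that the value after the final token is zero.

```python
def gae_advantages(rewards, values, gamma=1.0, lam=0.95):
    """Generalized Advantage Estimation for one output.

    rewards[t] and values[t] are given for t = 0..T-1.
    Assumption: the value after the last token is 0 (episode ends).
    """
    advantages = [0.0] * len(rewards)
    running = 0.0
    for t in reversed(range(len(rewards))):
        next_value = values[t + 1] if t + 1 < len(values) else 0.0
        # One-step TD residual.
        delta = rewards[t] + gamma * next_value - values[t]
        # Exponentially weighted sum of future TD residuals.
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages
```

Setting `lam=0` reduces the estimate to the one-step TD residual, while `lam=1` recovers the discounted return minus the value baseline.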

GRPO

PPO requires a separate value model that is usually the same size as the policy model, which brings substantial memory and computational costs. Additionally, the value function serves as the baseline in advantage computation; however, there is a mismatch between the value model and the reward model: the value model is expected to be accurate at every token, whereas the reward model only assigns a reward to the last token. Both concerns motivate GRPO, which removes the need for a token-wise value model.

As its name indicates, GRPO samples a group of outputs from the old policy $\pi_{\theta_{\text{old}}}$ for each question: $\{ o_1, o_2, \dots, o_G \}$, where $G$ is the group size. The average reward of the group then serves as the baseline.

\[\mathcal{J}_{\text{GRPO}}(\theta) = \underset{\substack{q \sim P(Q), \\ \{ o_i \}_{i=1}^{G} \sim \pi_{\theta_{\text{old}}}(O \vert q)}}{\mathbb{E}} \left[ \frac{1}{G} \sum_{i=1}^{G} \frac{1}{\vert o_i \vert } \sum_{t=1}^{\vert o_i \vert} \min \left[ \frac{\pi_{\theta}(o_{i,t} \vert q, o_{i,\lt t})}{\pi_{\theta_{\text{old}}}(o_{i,t} \vert q, o_{i,\lt t})} \hat{A}_{i,t}, \mathrm{clip}\left( \frac{\pi_{\theta}(o_{i,t} \vert q, o_{i,\lt t})}{\pi_{\theta_{\text{old}}}(o_{i,t} \vert q, o_{i,\lt t})}, 1-\epsilon, 1+\epsilon \right) \hat{A}_{i,t} \right] - \beta \mathbb{D}_{\text{KL}} \left( \pi_\theta \Vert \pi_{\text{ref}} \right) \right],\]where $\hat{A}_{i,t}$ is the advantage computed based on relative rewards of each group. Additionally, different from the KL penalty term of Equation \eqref{eq:ppo_reward}, GRPO estimates KL divergence with the following unbiased estimator:

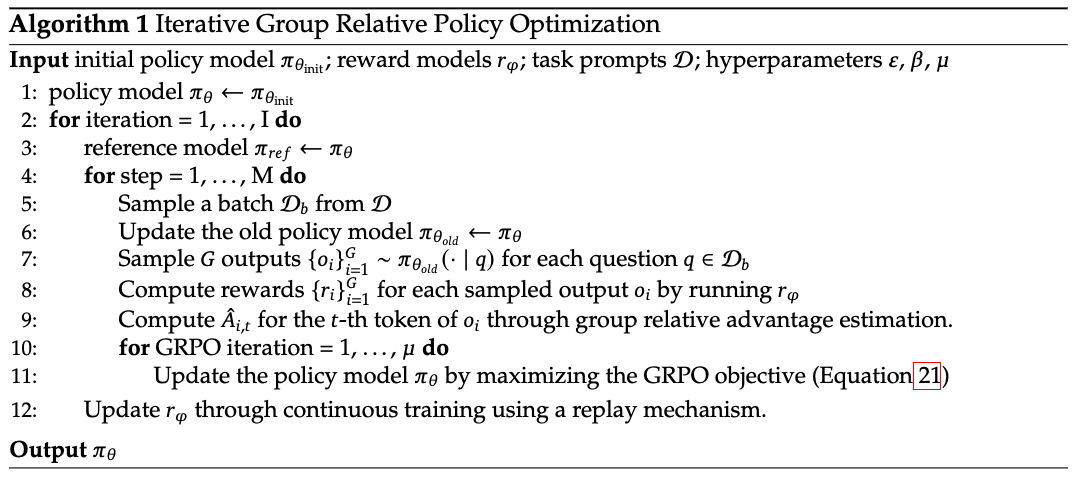

\[\mathbb{D}_{\text{KL}} \left(\pi_\theta \Vert \pi_\text{ref} \right) = \frac{\pi_{\theta_{\text{ref}}}(o_{i,t} \vert q, o_{i,\lt t})}{\pi_{\theta}(o_{i,t} \vert q, o_{i,\lt t})} - \log \frac{\pi_{\theta_{\text{ref}}}(o_{i,t} \vert q, o_{i,\lt t})}{\pi_{\theta}(o_{i,t} \vert q, o_{i,\lt t})} - 1.\]The algorithm for iterative GRPO (Figure 1) shows how reference model and old policy model are iteratively updated. The old policy model is frequently updated for each sampled batch while the reference model is updated at larger intervals.
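The KL estimator above can be checked numerically. The sketch below implements the per-token estimate from two log-probabilities and, for a small hypothetical three-token vocabulary, compares its exact expectation under $\pi_\theta$ with the true KL divergence. The helper names and the toy distributions are assumptions made only for illustration.

```python
import math


def kl_estimate(logp_policy, logp_ref):
    """Per-token estimate r - log(r) - 1, with r = pi_ref / pi_theta."""
    log_ratio = logp_ref - logp_policy
    return math.exp(log_ratio) - log_ratio - 1.0


def exact_kl(policy, ref):
    """True KL(pi_theta || pi_ref) over a small discrete vocabulary."""
    return sum(p * math.log(p / q) for p, q in zip(policy, ref))


def expected_estimate(policy, ref):
    """Expectation of the estimator when tokens are drawn from pi_theta."""
    return sum(
        p * kl_estimate(math.log(p), math.log(q)) for p, q in zip(policy, ref)
    )


# Toy next-token distributions (hypothetical values).
policy = [0.7, 0.2, 0.1]
ref = [0.5, 0.3, 0.2]
print(exact_kl(policy, ref), expected_estimate(policy, ref))
```

The two printed values agree up to floating-point error, which is the unbiasedness property. Each per-token estimate is also non-negative, since $r - \log r - 1 \geq 0$ for every $r > 0$, which a naive $-\log r$ estimate does not guarantee.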

The advantage $\hat{A}_{i,t}$ is computed via either outcome supervision or process supervision. For outcome supervision, each output receives a single reward. Given the group of rewards $\mathbf{r} = \{ r_1, r_2, \dots, r_G \}$, the rewards are normalized within the group, such that the normalized reward is $\tilde{r}_i = \frac{r_i - \mathrm{mean}(\mathbf{r})}{\mathrm{std}(\mathbf{r})}$. The normalized reward is then used as the advantage of every token in the output: $\hat{A}_{i,t} = \tilde{r}_i$.
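A minimal sketch of the outcome-supervision advantage, using only the standard library. Two details are assumptions rather than part of the formula above: the population standard deviation is used, and a small `eps` is added to the denominator so that a group with identical rewards does not divide by zero.

```python
import statistics


def outcome_advantages(rewards, lengths, eps=1e-8):
    """Group-normalized rewards, broadcast to every token of each output.

    rewards[i] is the single reward of output i; lengths[i] is its
    number of tokens. `eps` is an assumed numerical safeguard.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std (an assumption)
    normalized = [(r - mean) / (std + eps) for r in rewards]
    # Every token of output i shares the same advantage.
    return [[adv] * n for adv, n in zip(normalized, lengths)]


# A group of G = 4 outputs with binary rewards.
group = outcome_advantages([1.0, 0.0, 0.0, 1.0], [2, 3, 1, 2])
```

Outputs that score above the group mean get a positive advantage on all their tokens, and those below get a negative one. When all rewards in a group are equal, every advantage is zero and the group contributes no policy-gradient signal.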

For process supervision, rewards are assigned to each step of the outputs: $\mathbf{R} = \left\{ \left\{ r_1^{\text{index}(1)}, \dots, r_1^{\text{index}(K_1)} \right\}, \dots, \left\{ r_G^{\text{index}(1)}, \dots, r_G^{\text{index}(K_G)} \right\} \right\}$, where $\text{index}(j)$ is the ending token index of the $j$-th step and $K_i$ is the total number of steps in the $i$-th output. The rewards are normalized over all steps in the group: $\tilde{r}_i^{\text{index}(j)} = \frac{r_i^{\text{index}(j)} - \mathrm{mean}(\mathbf{R})}{\mathrm{std}(\mathbf{R})}$. The advantage of each output token is then the sum of the normalized rewards of the current step and all subsequent steps: $\hat{A}_{i,t} = \sum_{\text{index}(j)\geq t} \tilde{r}_i^{\text{index}(j)}$.
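The process-supervision advantage can be sketched in the same way. The input layout is hypothetical: `step_rewards[i][j]` is the reward of the $j$-th step of output $i$, and `step_ends[i][j]` is the 1-based token index at which that step ends, i.e. $\text{index}(j)$. As before, the population standard deviation and the `eps` safeguard are assumptions.

```python
import statistics


def process_advantages(step_rewards, step_ends, lengths, eps=1e-8):
    """Token advantages as sums of normalized rewards of remaining steps."""
    # Normalize over all step rewards of all outputs in the group.
    flat = [r for rewards in step_rewards for r in rewards]
    mean = statistics.fmean(flat)
    std = statistics.pstdev(flat)  # population std (an assumption)

    advantages = []
    for rewards, ends, n_tokens in zip(step_rewards, step_ends, lengths):
        normalized = [(r - mean) / (std + eps) for r in rewards]
        per_token = []
        for t in range(1, n_tokens + 1):  # 1-based token positions
            # Sum over every step whose end index is at or after token t.
            per_token.append(
                sum(nr for nr, end in zip(normalized, ends) if end >= t)
            )
        advantages.append(per_token)
    return advantages


# Two outputs with two steps each (toy values).
adv = process_advantages(
    step_rewards=[[1.0, 0.0], [0.0, 1.0]],
    step_ends=[[2, 4], [1, 3]],
    lengths=[4, 3],
)
```

Tokens in an early step accumulate the rewards of all later steps, so a good first step followed by a bad second step can net out to roughly zero, while tokens in the last step only see their own step reward.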

DAPO

GSPO

DPO

Direct Preference Optimization (DPO)

DPO directly minimizes the following loss function over a dataset of preference triplets, $\mathcal{D} = \{ (x, y_l, y_w) \}$, each consisting of a prompt, a rejected response, and a chosen response, respectively:

\[\mathcal{L}(\pi_\theta, \pi_{\text{ref}}) = - \underset{(x, y_l, y_w) \sim \mathcal{D}}{\mathbb{E}} \left[ \log \left( \sigma \left( \beta \left( \log \frac{\pi_\theta(y_w \vert x)}{\pi_\text{ref}(y_w \vert x)} - \log \frac{\pi_\theta(y_l \vert x)}{\pi_\text{ref}(y_l \vert x)} \right) \right) \right) \right],\]

where $\beta$ is the coefficient that controls the strength of the reference model constraint. The reward is $\hat{r}_\theta(x,y) = \beta \log \frac{\pi_\theta(y \vert x)}{\pi_\text{ref}(y \vert x)}$, which is implicitly defined by both the policy model and the reference model.
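For a single preference triplet, the loss above reduces to a few lines. This is a minimal sketch: the function names are hypothetical, and the inputs are assumed to be sequence-level log-probabilities $\log \pi(y \vert x)$ that have already been summed over the response tokens.

```python
import math


def implicit_reward(logp, ref_logp, beta):
    """Implicit reward beta * log(pi_theta(y|x) / pi_ref(y|x))."""
    return beta * (logp - ref_logp)


def dpo_loss(logp_w, logp_l, ref_logp_w, ref_logp_l, beta):
    """DPO loss for one (prompt, rejected, chosen) triplet."""
    margin = implicit_reward(logp_w, ref_logp_w, beta) - implicit_reward(
        logp_l, ref_logp_l, beta
    )
    # -log(sigmoid(margin)) = log(1 + exp(-margin)), computed stably.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))
```

When the policy equals the reference model, both implicit rewards are zero and the loss is $\log 2$. The loss decreases as the policy raises the likelihood of the chosen response relative to the rejected one, measured against the reference model.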

Summary

| Method | Token- or sequence-level | Online or offline |
|---|---|---|
| PPO | Token | Online |
| GRPO | Token | Online |
| DAPO | Token | Online |
| GSPO | Sequence | Online |
| DPO | Token | Offline |

If you found this useful, please cite this as:

Bao, Yuntai (Feb 2026). A Personal Review of *PO Algorithms. colored-dye’s blog. https://colored-dye.github.io.

or as a BibTeX entry:

@misc{bao2026a,
  title = {A Personal Review of *PO Algorithms},
  author = {Bao, Yuntai},
  note = {Blog post},
  year = {2026},
  month = {Feb},
  url = {https://colored-dye.github.io/blog/2026/grpo/}
}