Concept Distributed Alignment Search for Faithful Representation Steering

Discussions regarding our recent work on faithful representation steering.

In this blog post, I would like to expand on our recent work, Faithful Bi-Directional Model Steering via Distribution Matching and Distributed Interchange Interventions, especially regarding the conceptual nature of our method, Concept Distributed Alignment Search (CDAS).

A sober look beyond mech interp: CDAS as self-distillation from context

A number of recent papers have studied the topic of on-policy self-distillation.

This topic is particularly intriguing for its bootstrapping nature: instead of using an external, domain-specific teacher, a simple piece of task-specific context is sufficient for the policy model itself to serve as a competent self-teacher. The self-distillation loop allows for continual self-improvement, until the process hits some ceiling that is possibly bounded by the model’s pretraining knowledge capacity or reasoning capabilities.

In hindsight, I find that our representation steering method could alternatively be positioned as context distillation: the concept-specific steering instruction is distilled into the steering vector via a distribution-matching objective, except that we use a JSD loss rather than a reverse KL loss. This resonates with previous findings in general knowledge distillation, where generalized JSD sometimes outperforms reverse KL.
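To make the difference between the two losses concrete, here is a minimal sketch on toy numbers. The `teacher` and `student` distributions are hypothetical stand-ins for the model's next-token distribution with the steering instruction in context and with the steering vector applied; this is not the training code from the paper.

```python
import math

def kl(p, q):
    """KL(p || q) for discrete distributions given as lists of probabilities.

    Assumes q[i] > 0 wherever p[i] > 0.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def jsd(p, q):
    """Jensen-Shannon divergence: symmetric and bounded above by log 2."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

# Hypothetical next-token distributions over a 3-token vocabulary.
teacher = [0.70, 0.20, 0.10]  # model with the steering instruction in context
student = [0.10, 0.20, 0.70]  # model with the steering vector applied

reverse_kl = kl(student, teacher)  # expectation taken under the student
js = jsd(student, teacher)

print(f"reverse KL = {reverse_kl:.4f}, JSD = {js:.4f}")
```

On a badly mismatched pair like this one, reverse KL is unbounded in general, while JSD stays below $\log 2$ and treats the two distributions symmetrically.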

This perspective connects our findings to those of recent works on self-distillation and context distillation. In general, self-distillation is found to facilitate continual learning.

Early exploration and misconception: theoretical discussions

In early 2025, I was deeply intrigued by the causal abstraction branch of mechanistic interpretability and was working on improving Distributed Alignment Search (DAS). For a prompt such as “New York is in the country of”, multiple responses could be considered factually correct: the U.S., the United States, America. Setting the answer to be strictly U.S. under greedy decoding might deviate from the model’s inherent tendencies, since the model might prefer a different but semantically similar answer. By explicitly incorporating probabilities in the training objective of causal abstraction methods, we might be able to utilize the curated constant labels in a manner that is more faithful to the model of interest, without sampling labels from the target model and filtering for useful ones in a model-specific manner.

We initially submitted the paper to NeurIPS 2025. However, our discussions with the reviewers made us aware of a fundamental mistake regarding the conceptual nature of our method: CDAS should be positioned as a steering method, not a causal variable localization method. More specifically, CDAS is dedicated to a subset of causal variables: those directly related to outputs or properties of outputs, e.g., output tokens and output-oriented concepts. These variables are usually leaf nodes of causal graphs or single parents of leaf nodes (e.g., Y, Z when the causal graph is a linear chain X -> Y -> Z, or Y, Z when the graph is X1 -> Y, X2 -> Y, Y -> Z). The practical implication is that, unlike DAS, CDAS cannot accomplish general-purpose causal abstraction.
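The graph criterion can be sketched in a few lines. The function name `output_side_variables` and the edge-list encoding are illustrative choices, and the rule implemented here is only the "leaf node or single parent of a leaf node" heuristic stated above, not a formal definition from the paper.

```python
def output_side_variables(edges):
    """Return leaf nodes plus any node that is the sole parent of a leaf.

    `edges` is a list of (parent, child) pairs describing a causal DAG.
    """
    nodes = {n for edge in edges for n in edge}
    parents = {n: set() for n in nodes}
    children = {n: set() for n in nodes}
    for src, dst in edges:
        parents[dst].add(src)
        children[src].add(dst)

    leaves = {n for n in nodes if not children[n]}
    sole_parents = {
        next(iter(parents[leaf])) for leaf in leaves if len(parents[leaf]) == 1
    }
    return leaves | sole_parents

chain = [("X", "Y"), ("Y", "Z")]                   # X -> Y -> Z
fan_in = [("X1", "Y"), ("X2", "Y"), ("Y", "Z")]    # X1 -> Y, X2 -> Y, Y -> Z

print(sorted(output_side_variables(chain)))   # ['Y', 'Z']
print(sorted(output_side_variables(fan_in)))  # ['Y', 'Z']
```

In both example graphs, the input-side variables (X, X1, X2) are excluded, which matches the variables CDAS is not suited for.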

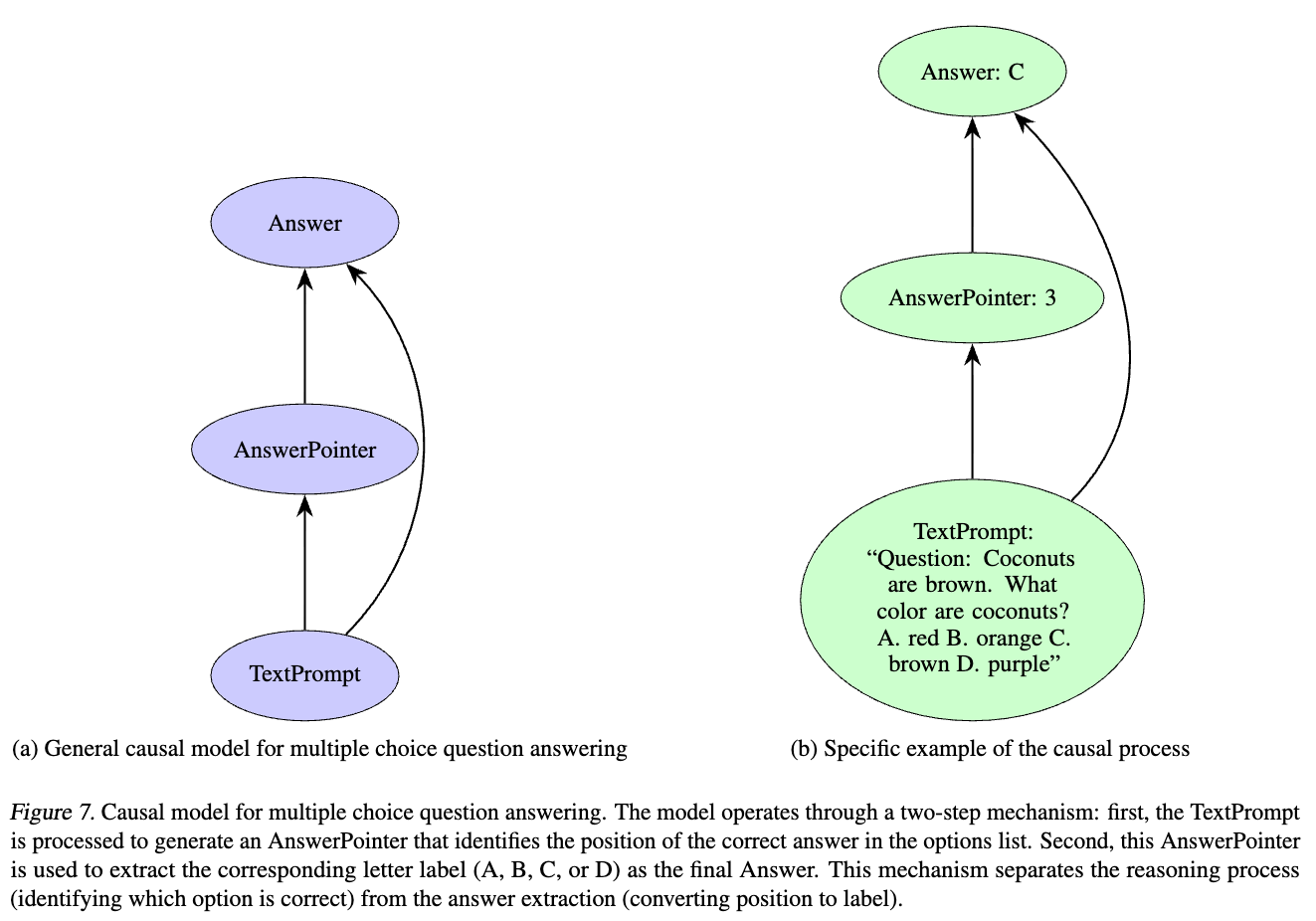

We use a multiple-choice task as an illustrative example. The high-level causal model of multiple-choice tasks, $\mathcal{H}$ (shown in Figure 1), defines two important causal variables: $X_\text{Order}$ (position of the answer) and $O_\text{Answer}$ (answer token). According to Mueller et al.

Let the base input $b$ be a question prompt with choices A, B, C, D whose correct choice letter is $y^b = \verb|C|$, so the (zero-based) choice index is 2. Let the counterfactual input $c$ have choices E, F, G, H with correct choice letter $y^c = \verb|F|$, so the choice index is 1. $b$ and $c$ are essentially the same question, except that $c$ shuffles the order of choices and replaces the choice letters. After an interchange intervention on the $X_\text{Order}$ variable, the intervened output has the same choice index, 1, as under input $c$. Therefore, intervening on the base input $b$ yields the intervened counterfactual answer $y^{b*}=\verb|B|$.
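The worked example can be replayed with a toy version of the high-level model. The dictionary layout and function names below are illustrative assumptions; only the letters and indices come from the example.

```python
def high_level_answer(letters, order):
    """Toy high-level model H: the answer token is the letter at index X_Order."""
    return letters[order]

def interchange_on_order(base, counterfactual):
    """Run H on the base input with X_Order taken from the counterfactual input."""
    return high_level_answer(base["letters"], counterfactual["order"])

base = {"letters": ["A", "B", "C", "D"], "order": 2}
counterfactual = {"letters": ["E", "F", "G", "H"], "order": 1}

y_b = high_level_answer(base["letters"], base["order"])                      # 'C'
y_c = high_level_answer(counterfactual["letters"], counterfactual["order"])  # 'F'
y_b_star = interchange_on_order(base, counterfactual)                        # 'B'

print(y_b, y_c, y_b_star)
```

Note that `y_b_star` uses the base letters with the counterfactual index, so it differs from both `y_b` and `y_c`.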

Recall that the positive term of the CDAS training objective is as follows:

\[D_{\Phi}^+ = \frac{1}{\vert y^{b*} \vert} \sum_{k=1}^{\vert y^{b*} \vert} D_{\mathrm{JS}}\left( \mathbf{p}_{\Phi} \left( \cdot \vert y^{b*}_{\lt k}, b; \mathbf{h} \leftarrow \Phi^{\mathrm{DII}}(c) \right) \big\| \mathbf{p} \left( \cdot \vert y^{b*}_{\lt k}, c \right) \right),\]

where $D_{\mathrm{JS}}(\cdot \Vert \cdot)$ is the Jensen-Shannon divergence.
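As a rough numerical sketch of this term, the per-position distributions can be hard-coded instead of coming from model forward passes. Here `intervened[k]` stands in for $\mathbf{p}_{\Phi}(\cdot \vert y^{b*}_{\lt k}, b; \mathbf{h} \leftarrow \Phi^{\mathrm{DII}}(c))$ and `counterfactual[k]` for $\mathbf{p}(\cdot \vert y^{b*}_{\lt k}, c)$; the numbers and the function name `positive_term` are hypothetical.

```python
import math

def kl(p, q):
    """KL(p || q) for discrete distributions; assumes q[i] > 0 wherever p[i] > 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def jsd(p, q):
    """Jensen-Shannon divergence between two discrete distributions."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)

def positive_term(intervened, counterfactual):
    """Average the per-position JSD over the label positions."""
    if not intervened or len(intervened) != len(counterfactual):
        raise ValueError("need one distribution pair per label position")
    total = sum(jsd(p, q) for p, q in zip(intervened, counterfactual))
    return total / len(intervened)

# Hypothetical distributions over a 3-token vocabulary at 2 label positions.
intervened = [[0.6, 0.3, 0.1], [0.2, 0.7, 0.1]]
counterfactual = [[0.5, 0.4, 0.1], [0.1, 0.8, 0.1]]

d_plus = positive_term(intervened, counterfactual)
print(f"D+ = {d_plus:.5f}")
```

The term is zero exactly when the intervened and un-intervened distributions agree at every position, which is what the optimization drives toward.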

The problem is that, when conditioned on the counterfactual input $c$, the un-intervened probability of the intervened counterfactual label $y^{b*}$, i.e. $p(y^{b*} \vert c)$, is low since $y^{b*} \neq y^c$. As a result, the intervened counterfactual label does not provide sufficient signal to optimize for alignment, and the resulting intervention does not correspond to features of the target causal variable. The cause of this problem is that the intervened counterfactual label is a composite of the answer index and the input prompt, and it is not even a plausible answer given the counterfactual input (in the example above, B is not among the choices E, F, G, H of $c$). In contrast, DAS does not suffer from this problem since its loss signal comes from constant external labels, not model-induced probability distributions.

Acknowledging this problem, we treat CDAS as a method for identifying features of output-oriented concepts that directly inform concept-based steering. To make this point clear, we also state in the main body of our paper that CDAS is not a general-purpose causal abstraction method:

Remark (CDAS is not causal variable localization). While CDAS draws inspiration from DAS, it should not be viewed as a causal variable localization method: DAS assumes access to a high-level algorithm with near-perfect supervision; whereas our goal is not to identify ground-truth causal variables, but to find useful features that enable faithful steering. Thus, CDAS is best understood as a steering method motivated by causal variable localization principles.

CDAS for causal abstraction? An empirical analysis

Benchmark dataset and metric. I tested CDAS on the causal variable localization track of the Mechanistic Interpretability Benchmark (MIB).

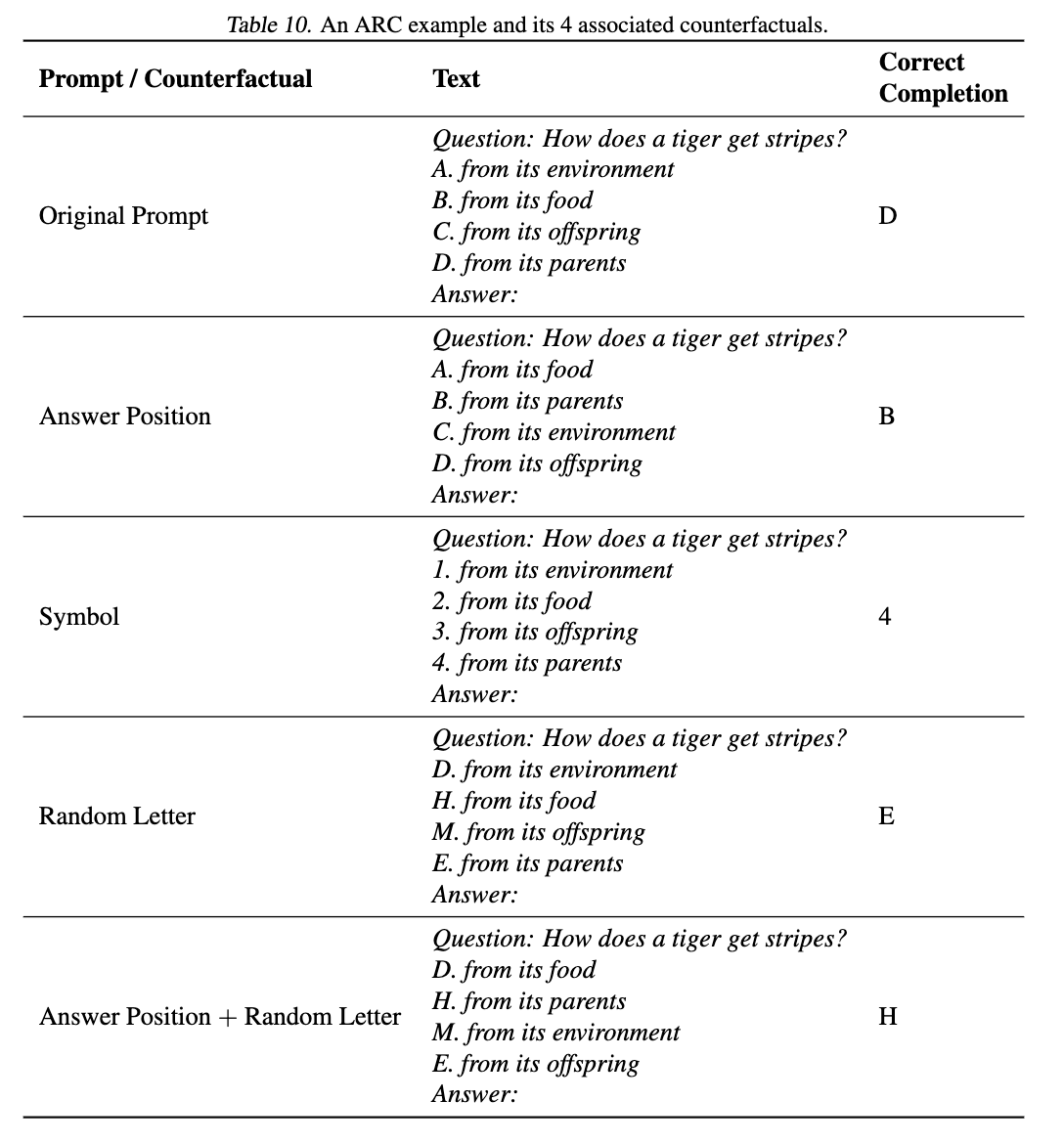

The dataset consists of three subsets, corresponding to three types of counterfactuals: answerPosition (only change the orders of choices), randomLetter (only change the choice letters) and answerPosition_randomLetter (change both choice orders and letters). Examples of these counterfactuals are shown in Figure 2.

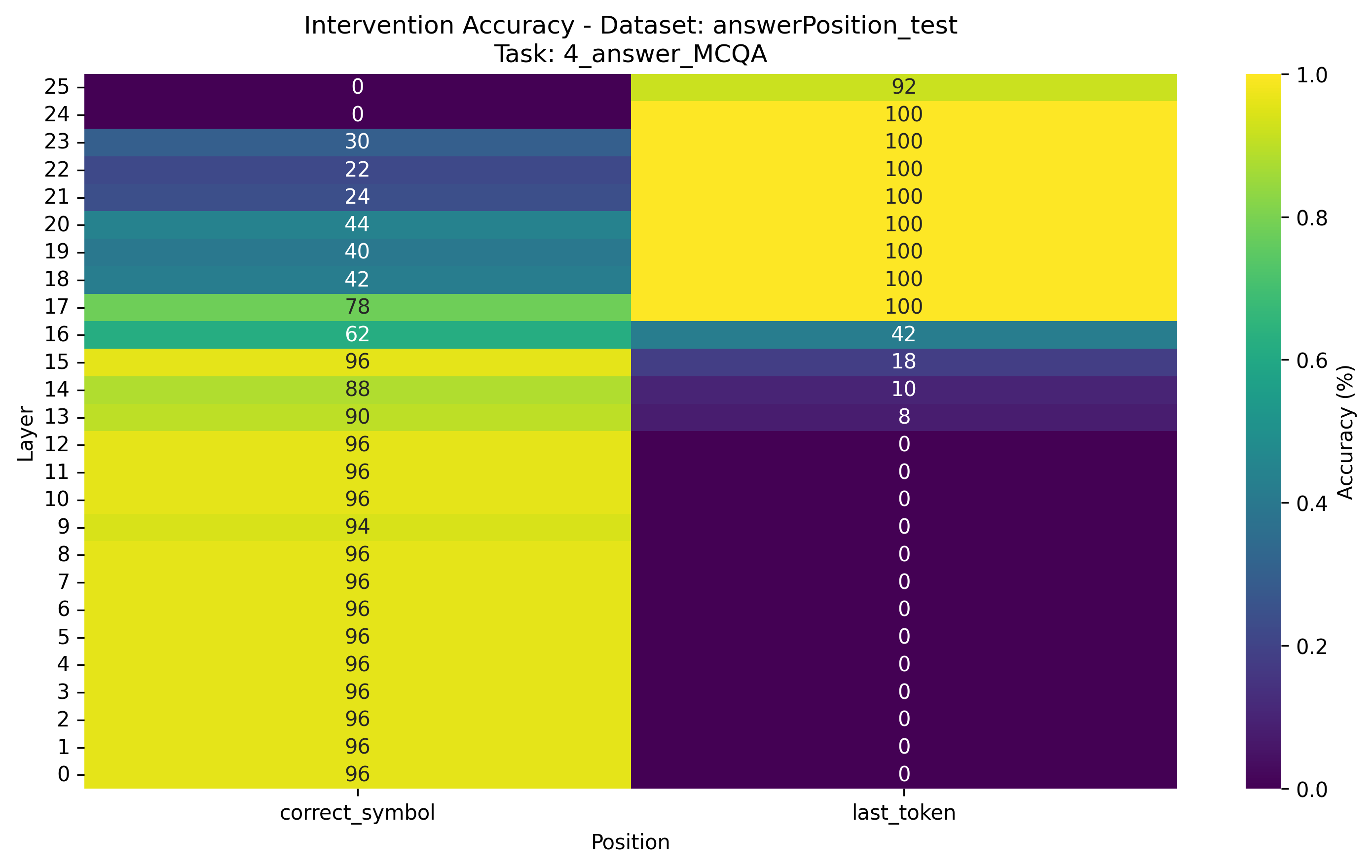

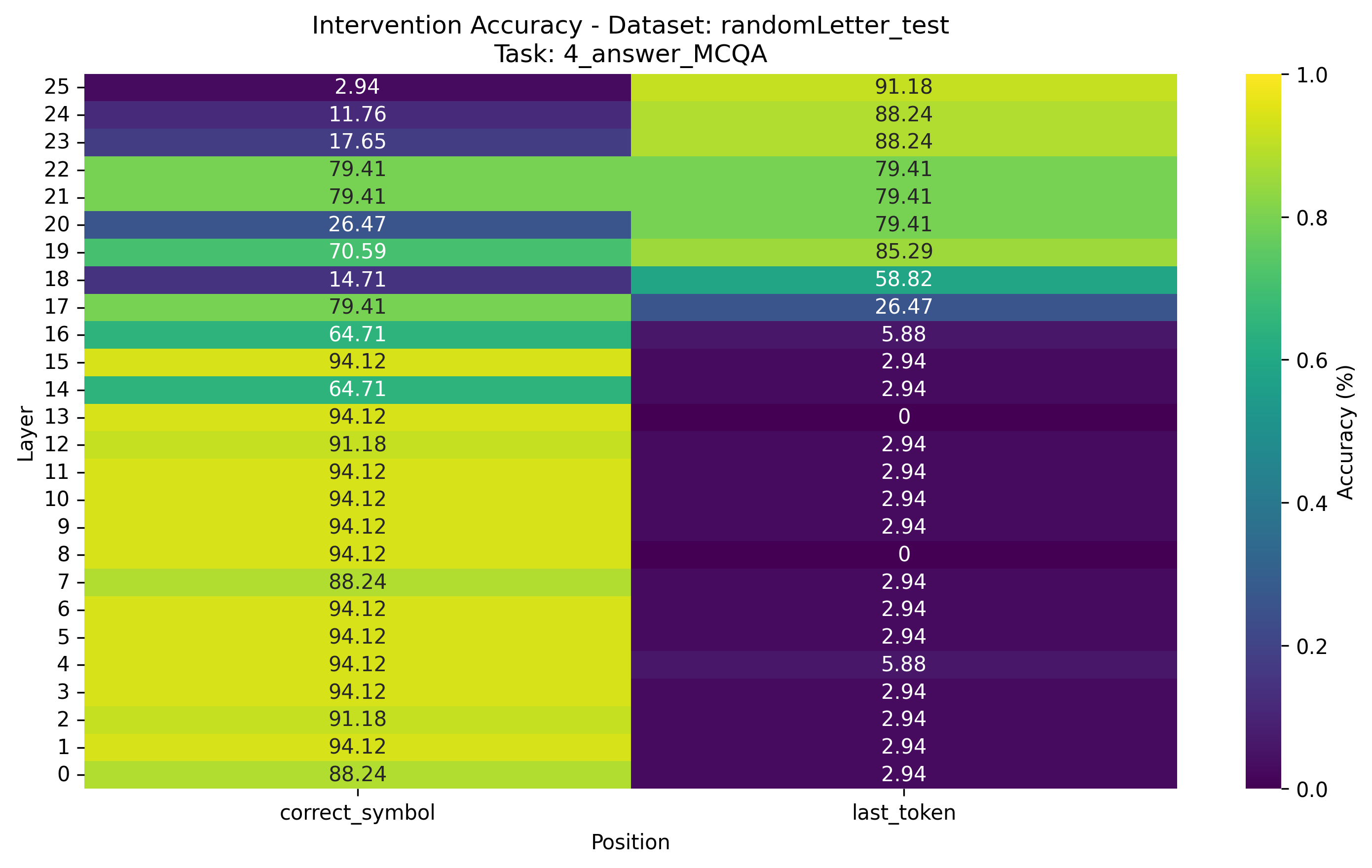
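The three counterfactual types can be mimicked with a small generator. This is an illustrative sketch only, not MIB's data-generation code: the function `make_counterfactual`, the rotation-based reordering, and the toy options are all assumptions.

```python
import random
import string

def make_counterfactual(letters, options, answer_idx, kind, rng):
    """Return (letters, options, answer_idx) for one counterfactual prompt."""
    new_letters, new_options, new_idx = list(letters), list(options), answer_idx
    if kind in ("answerPosition", "answerPosition_randomLetter"):
        # Rotate the options by a non-zero offset so the answer position must change.
        shift = rng.randrange(1, len(options))
        new_options = list(options[-shift:]) + list(options[:-shift])
        new_idx = (answer_idx + shift) % len(options)
    if kind in ("randomLetter", "answerPosition_randomLetter"):
        # Draw fresh, distinct letters that do not appear in the base prompt.
        pool = [ch for ch in string.ascii_uppercase if ch not in letters]
        new_letters = rng.sample(pool, len(letters))
    return new_letters, new_options, new_idx

rng = random.Random(0)
letters = ["A", "B", "C", "D"]
options = ["red", "green", "blue", "pink"]  # hypothetical answer options
for kind in ("answerPosition", "randomLetter", "answerPosition_randomLetter"):
    print(kind, make_counterfactual(letters, options, 2, kind, rng))
```

Only the first type changes $X_\text{Order}$ while keeping the letters fixed, and only the second changes the letters while keeping the order fixed.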

Intervention positions include the last token (last_token) and the choice letter of the correct answer (correct_symbol).

The metric is interchange intervention accuracy (IIA). We now formulate this metric according to
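In its common form, IIA is the fraction of base/counterfactual pairs for which the intervened model output matches the output that the high-level causal model predicts under the same intervention. A minimal sketch of that computation, with hypothetical outputs, could look as follows; it is not MIB's evaluation code.

```python
def interchange_intervention_accuracy(intervened_outputs, expected_outputs):
    """Fraction of pairs where the intervened model output equals the
    output predicted by the high-level causal model under the same intervention."""
    if not expected_outputs or len(intervened_outputs) != len(expected_outputs):
        raise ValueError("need one expected output per intervened output")
    matches = sum(o == e for o, e in zip(intervened_outputs, expected_outputs))
    return matches / len(expected_outputs)

# Hypothetical answers for four base/counterfactual pairs.
model_after_intervention = ["B", "C", "A", "D"]
high_level_prediction = ["B", "C", "D", "D"]

iia = interchange_intervention_accuracy(model_after_intervention, high_level_prediction)
print(iia)  # 0.75
```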

| Method | $O_\text{Answer}$ | $X_\text{Order}$ |
|---|---|---|
| CDAS | 89 (95) | 63 (77) |
| DAS$^*$ | 95 (97) | 77 (93) |
| DBM$^*$ | 84 (99) | 63 (84) |
| Full vector$^*$ | 61 (100) | 44 (77) |

| Method | $O_\text{Answer}$ | $X_\text{Order}$ |
|---|---|---|
| CDAS | 93 (97) | 42 (61) |
| DAS$^*$ | 88 (94) | 76 (88) |
| DBM$^*$ | 82 (99) | 63 (80) |
| Full vector$^*$ | 63 (100) | 43 (74) |

| Method | $X_\text{Carry}$ |
|---|---|
| CDAS | 27 (31) |
| DAS$^*$ | 31 (35) |
| DBM$^*$ | 32 (44) |
| Full vector$^*$ | 29 (35) |

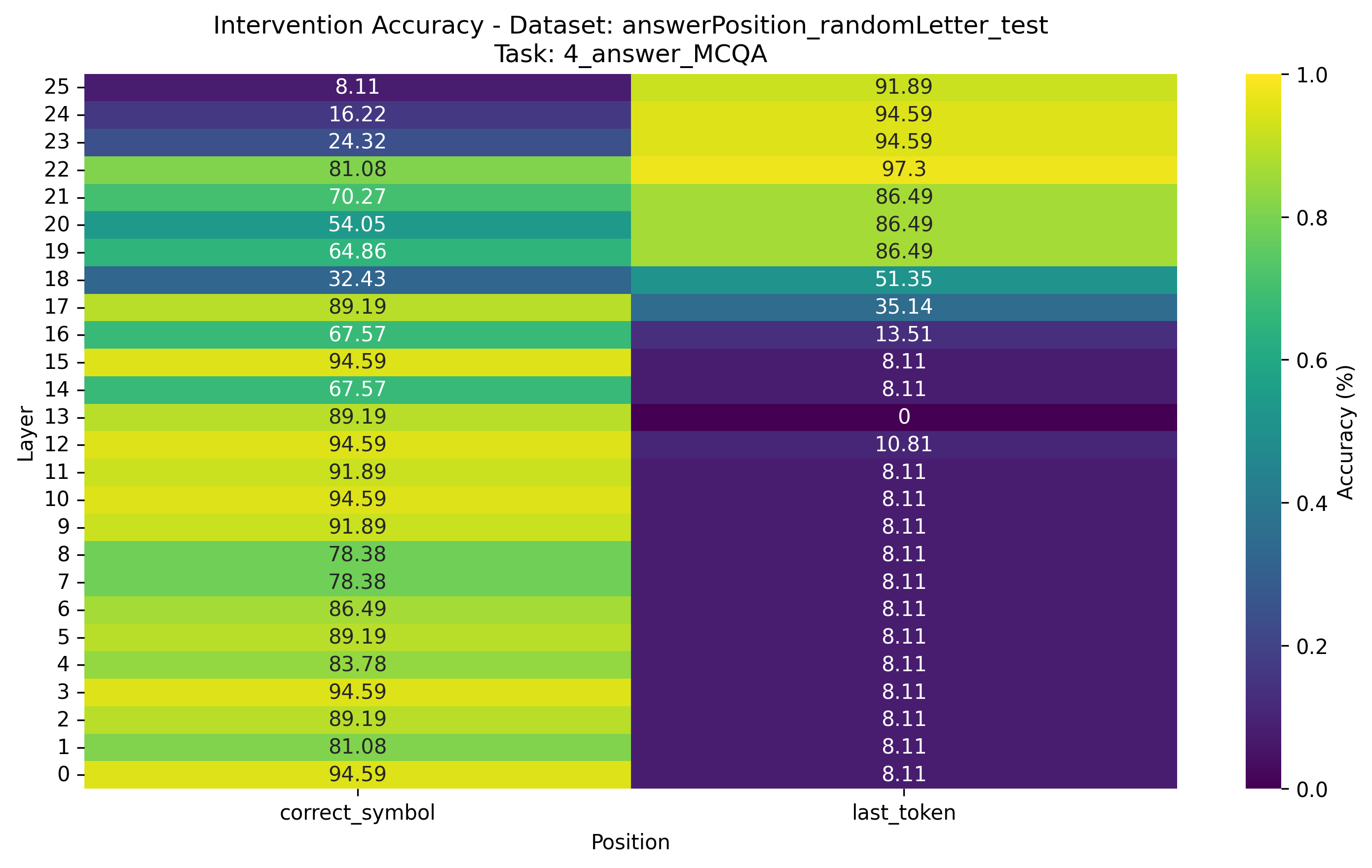

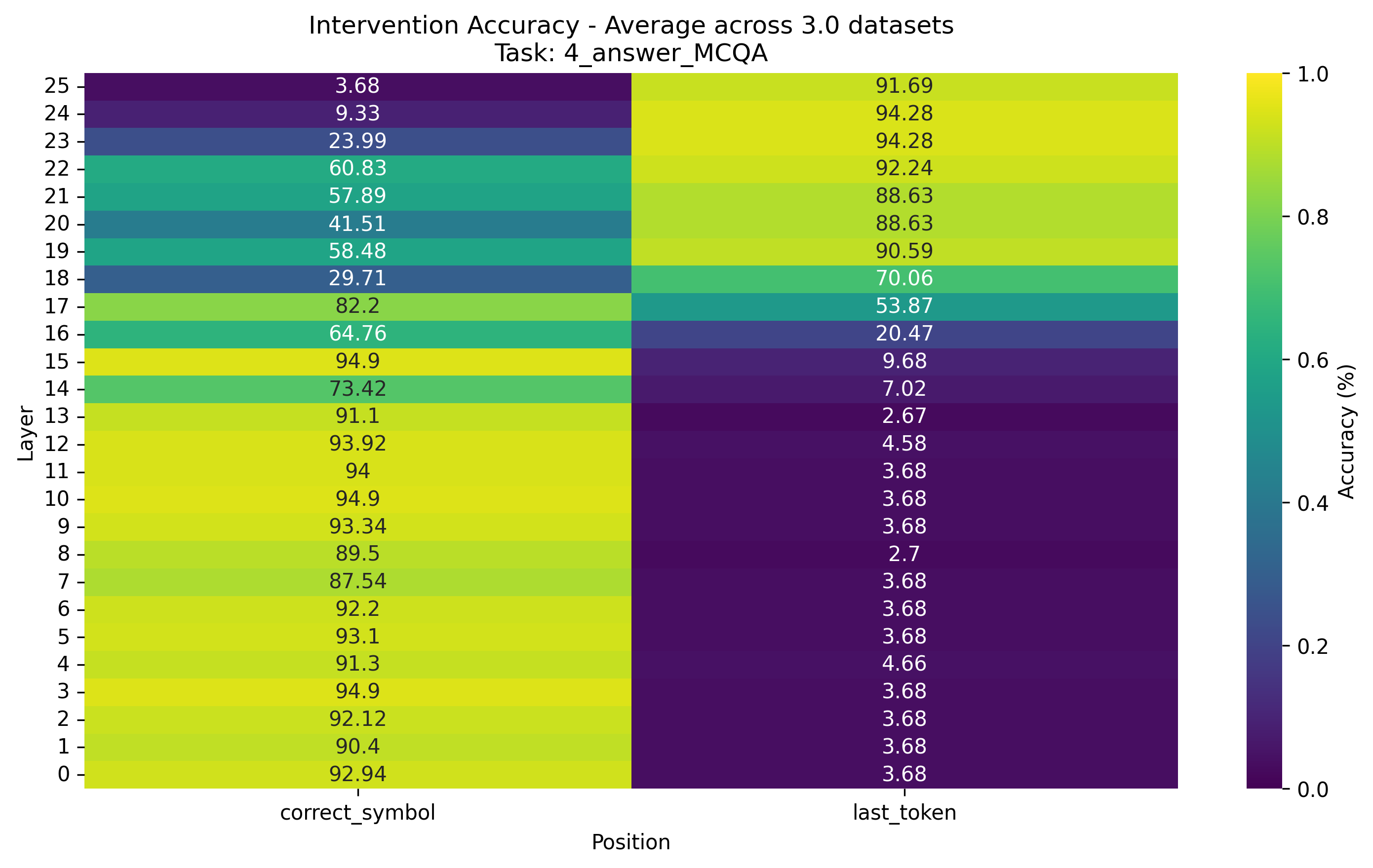

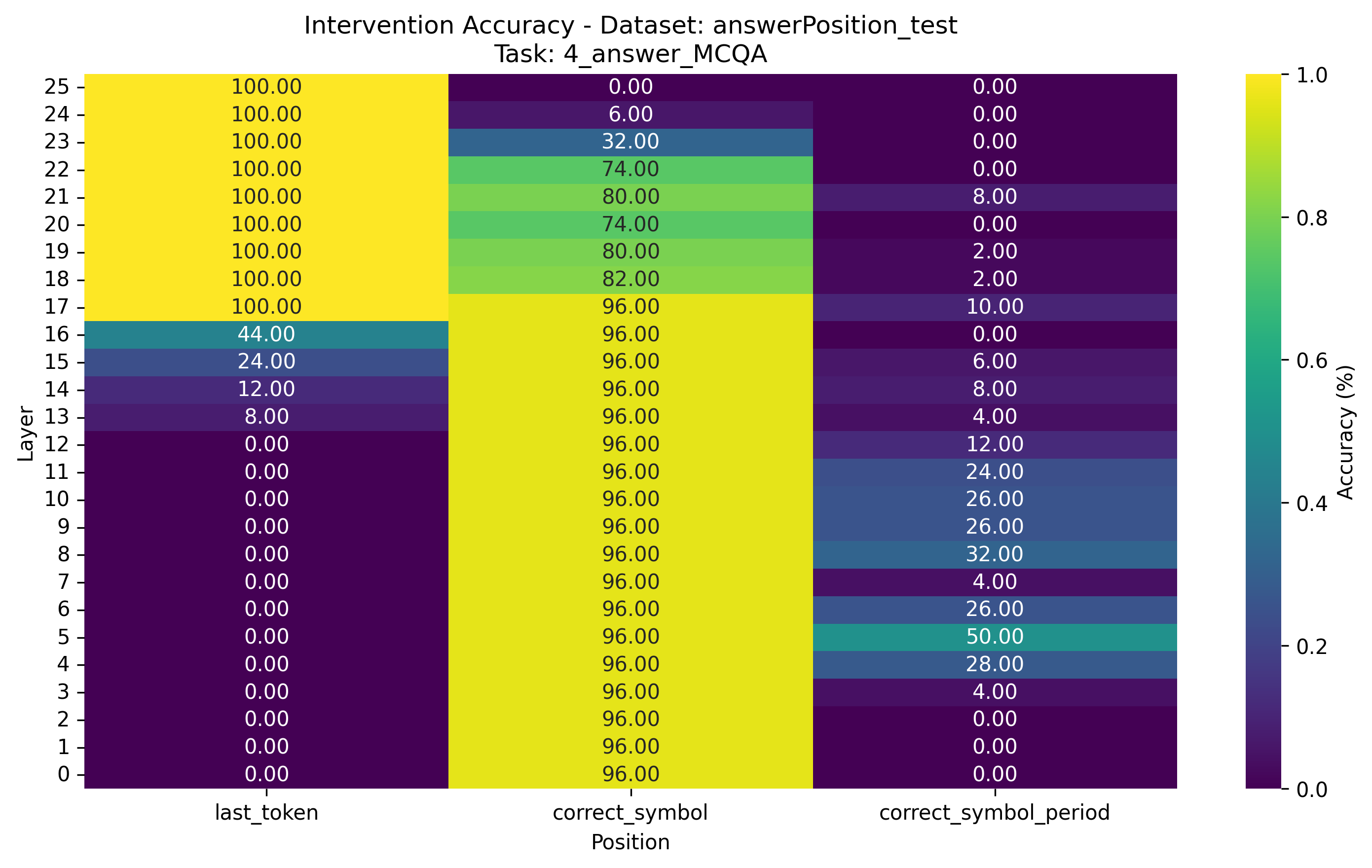

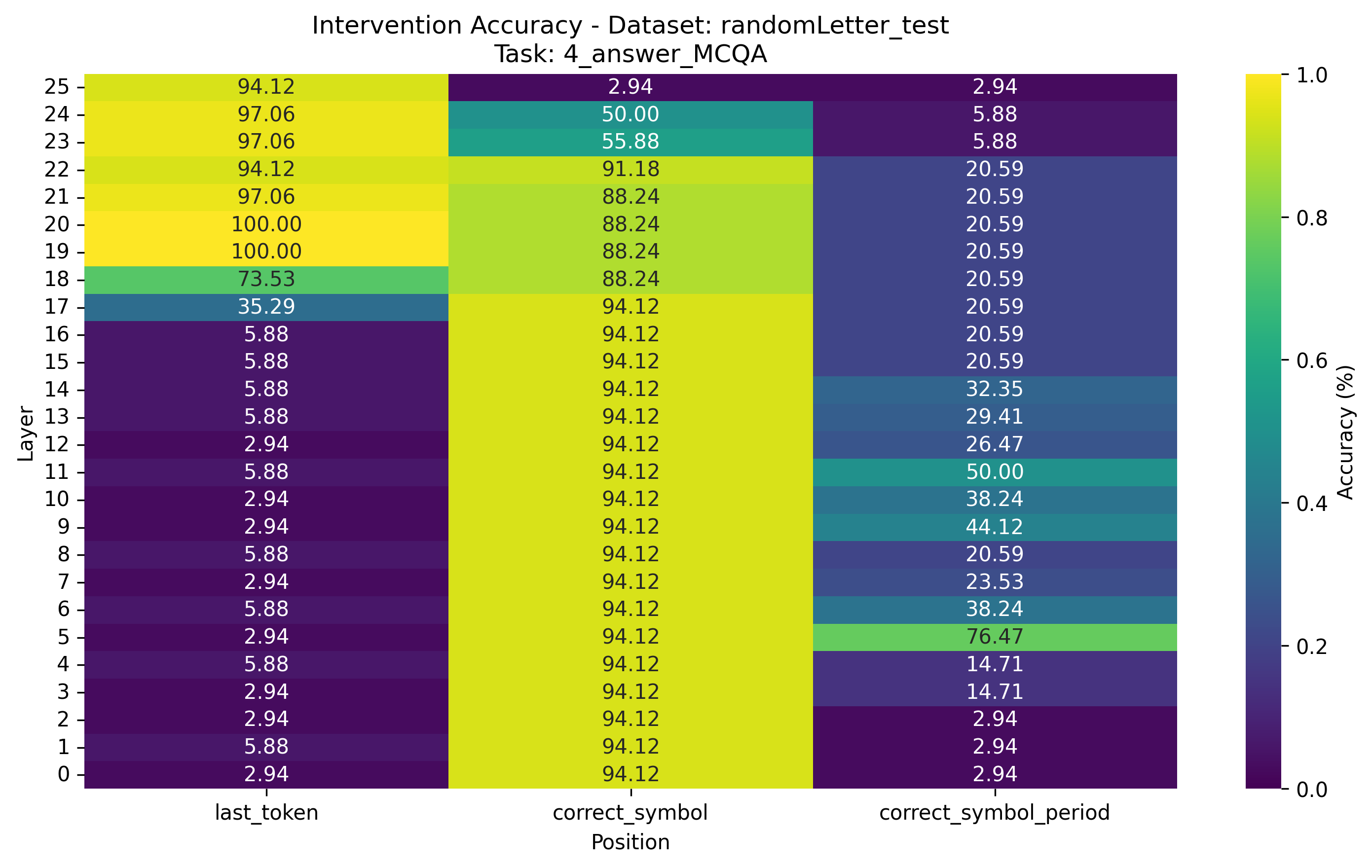

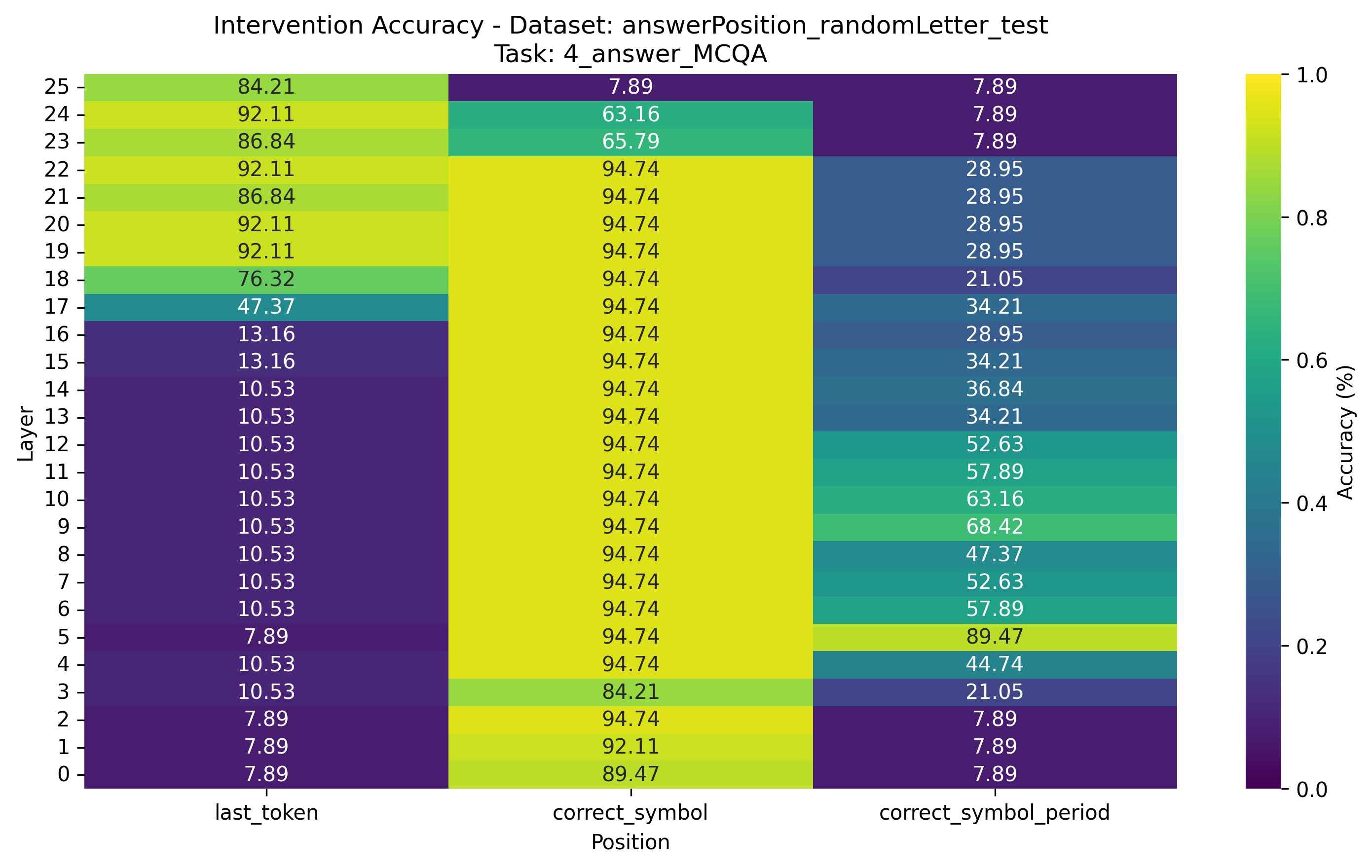

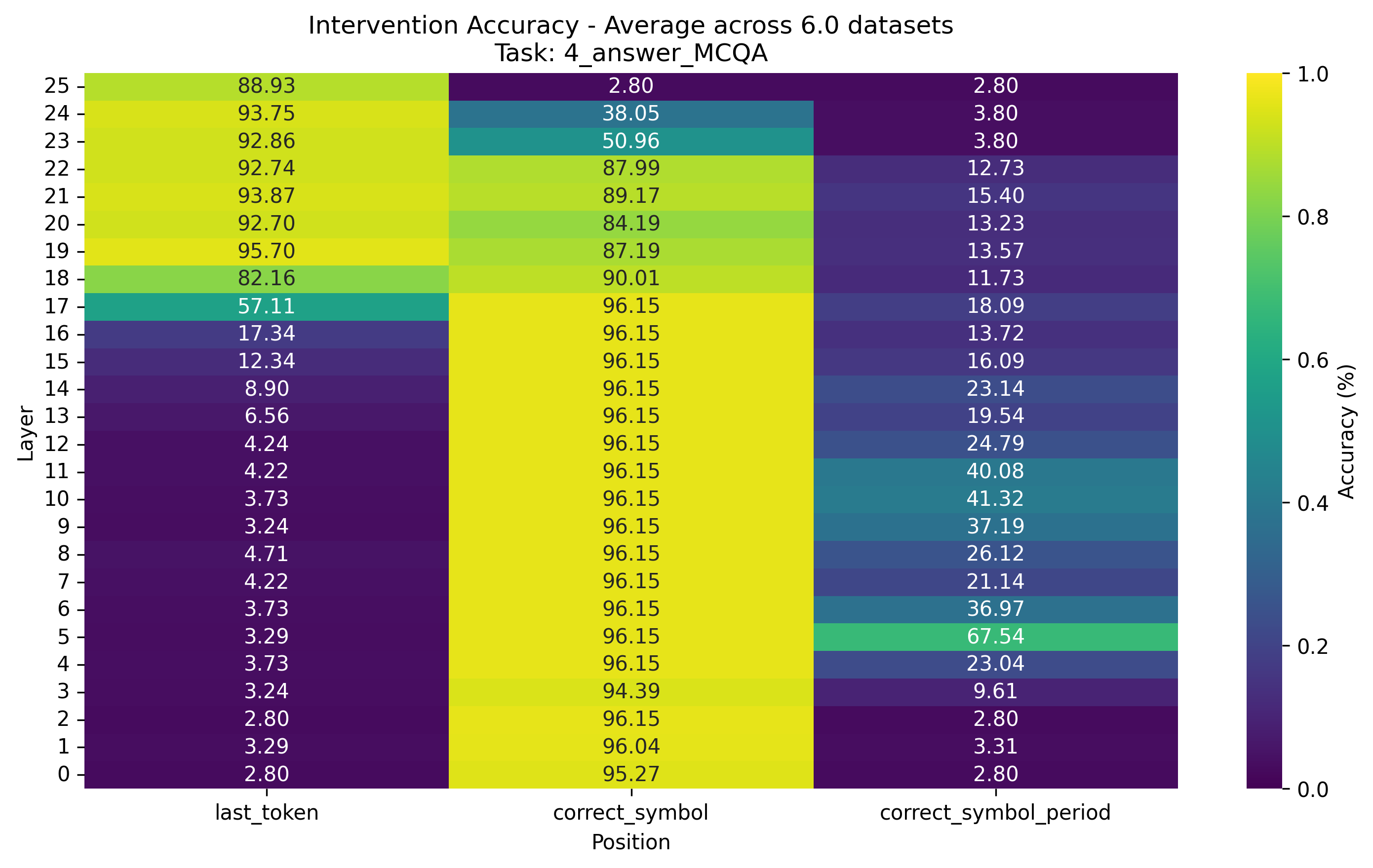

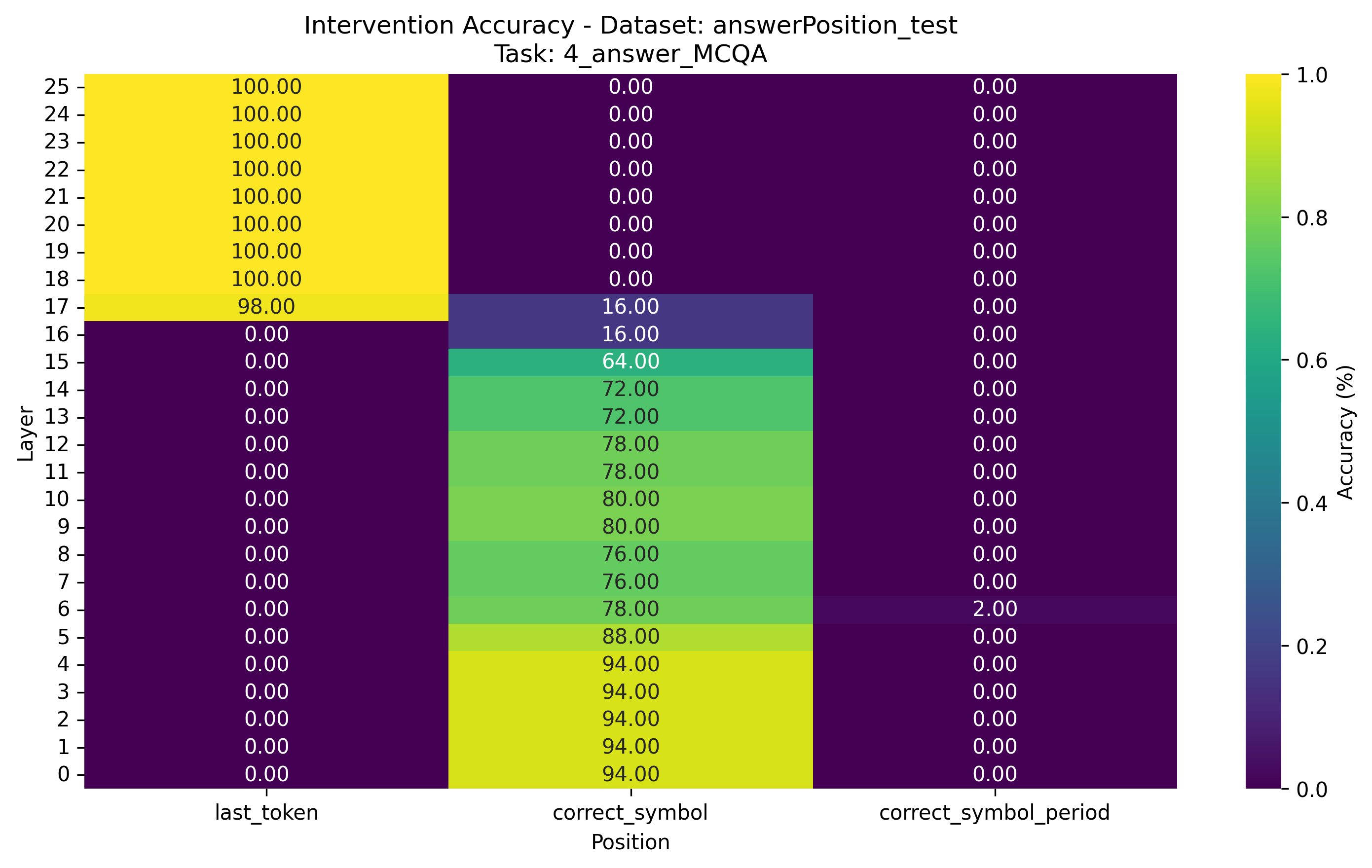

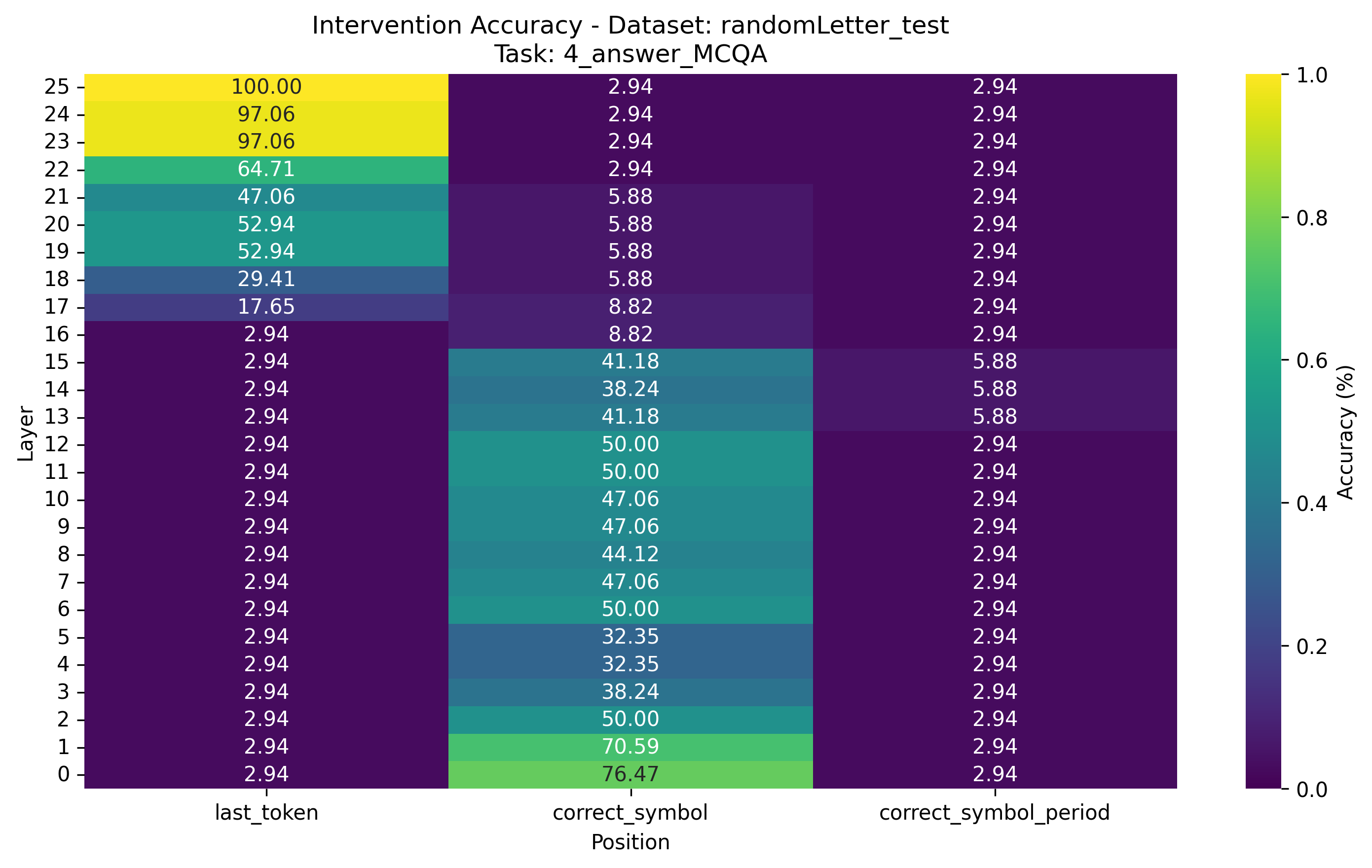

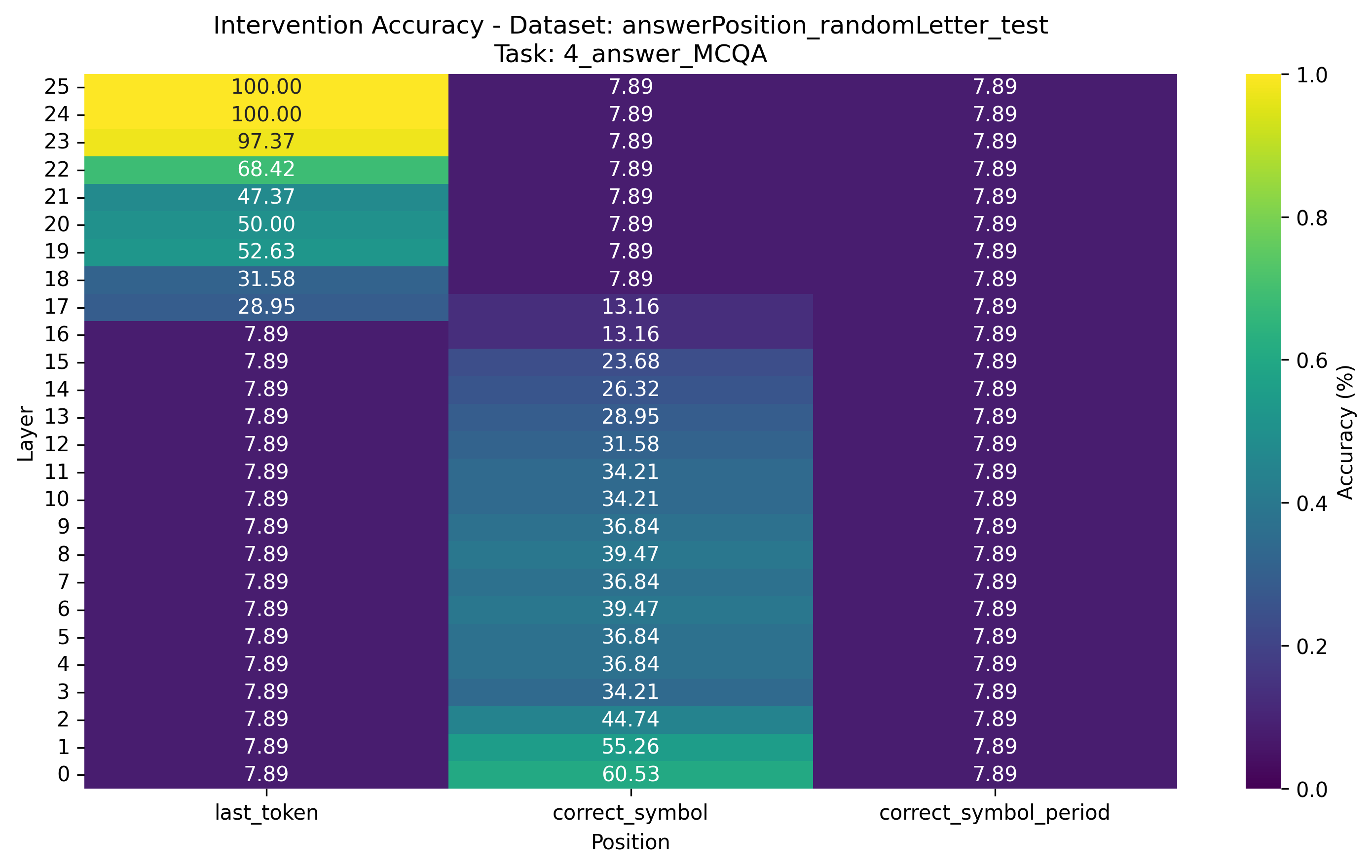

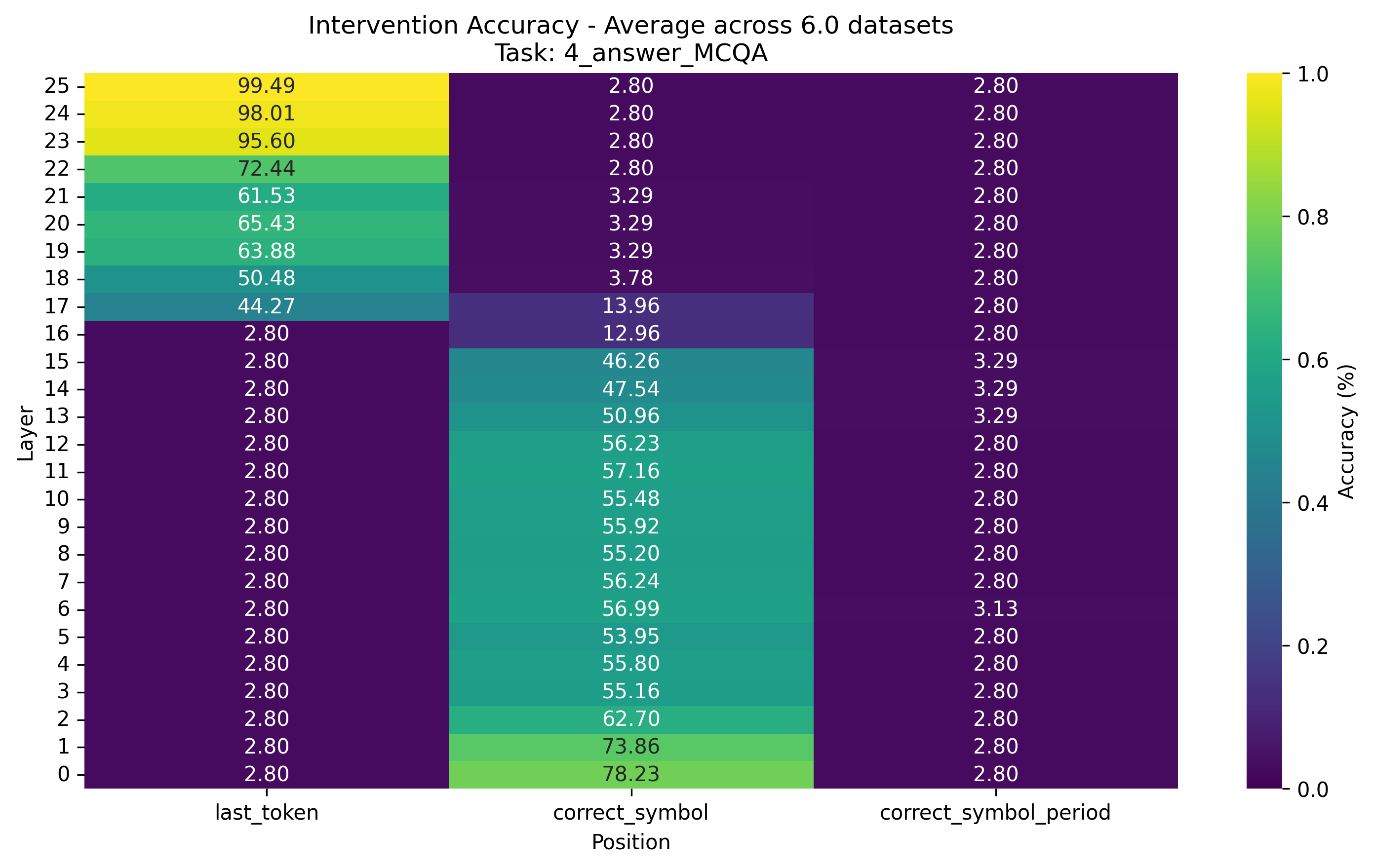

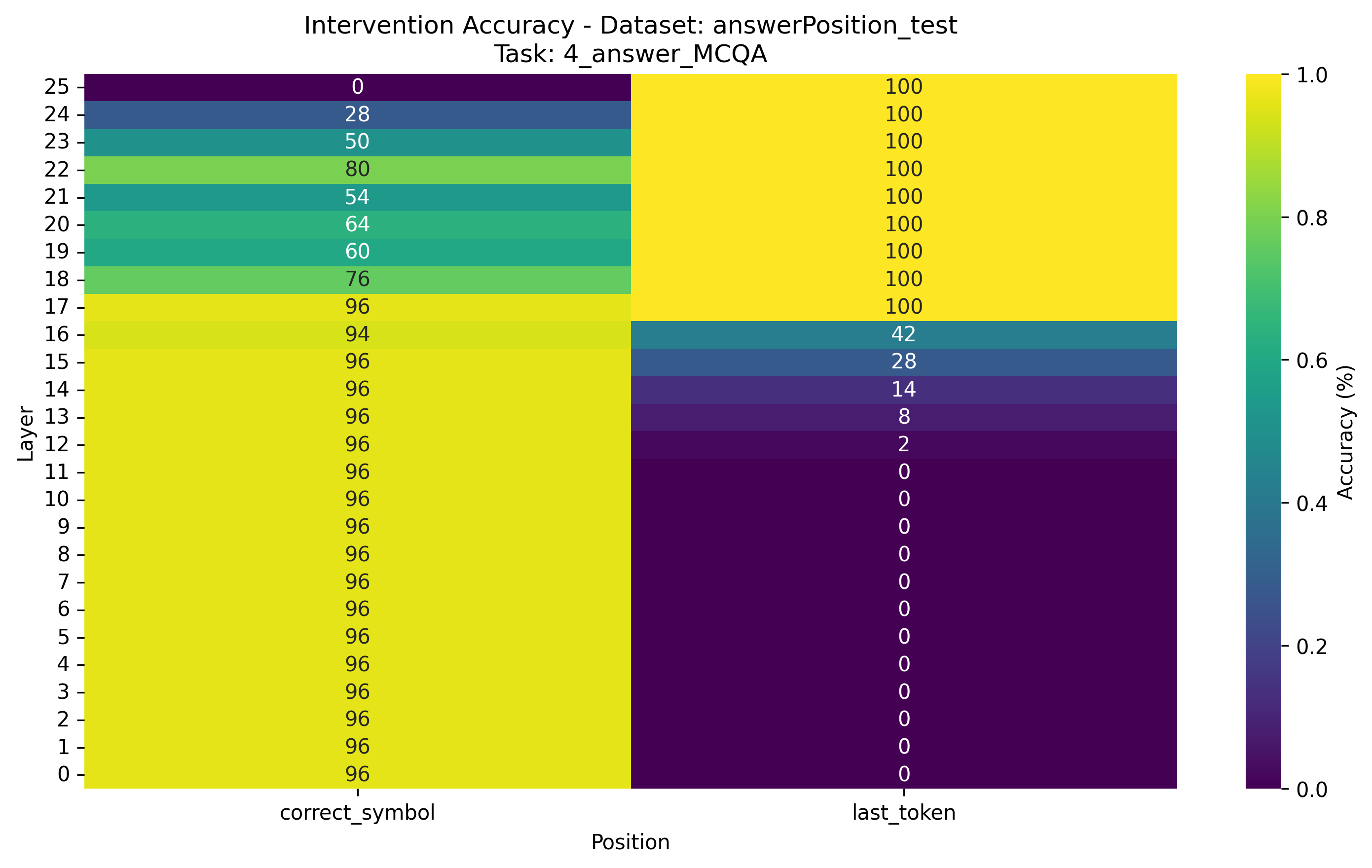

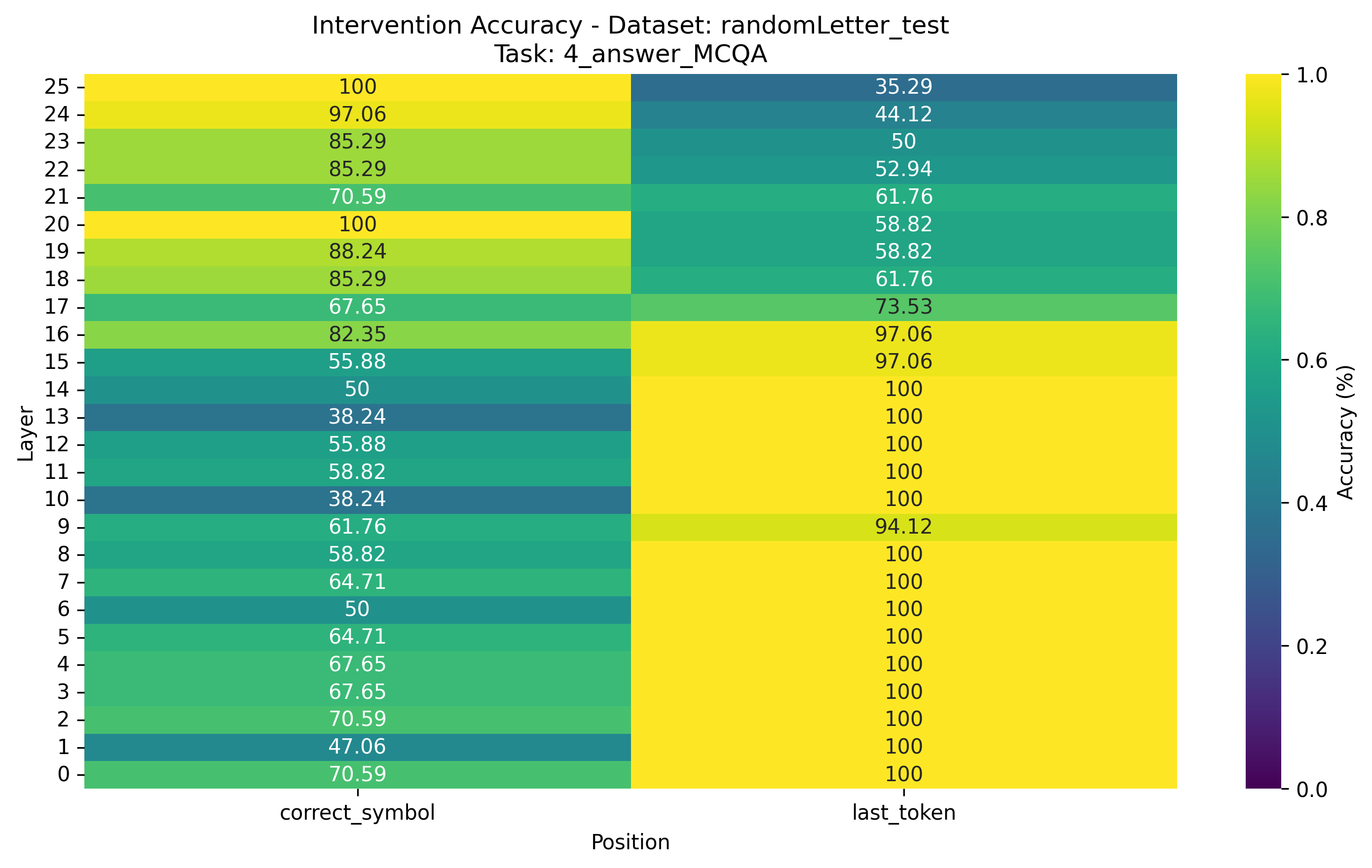

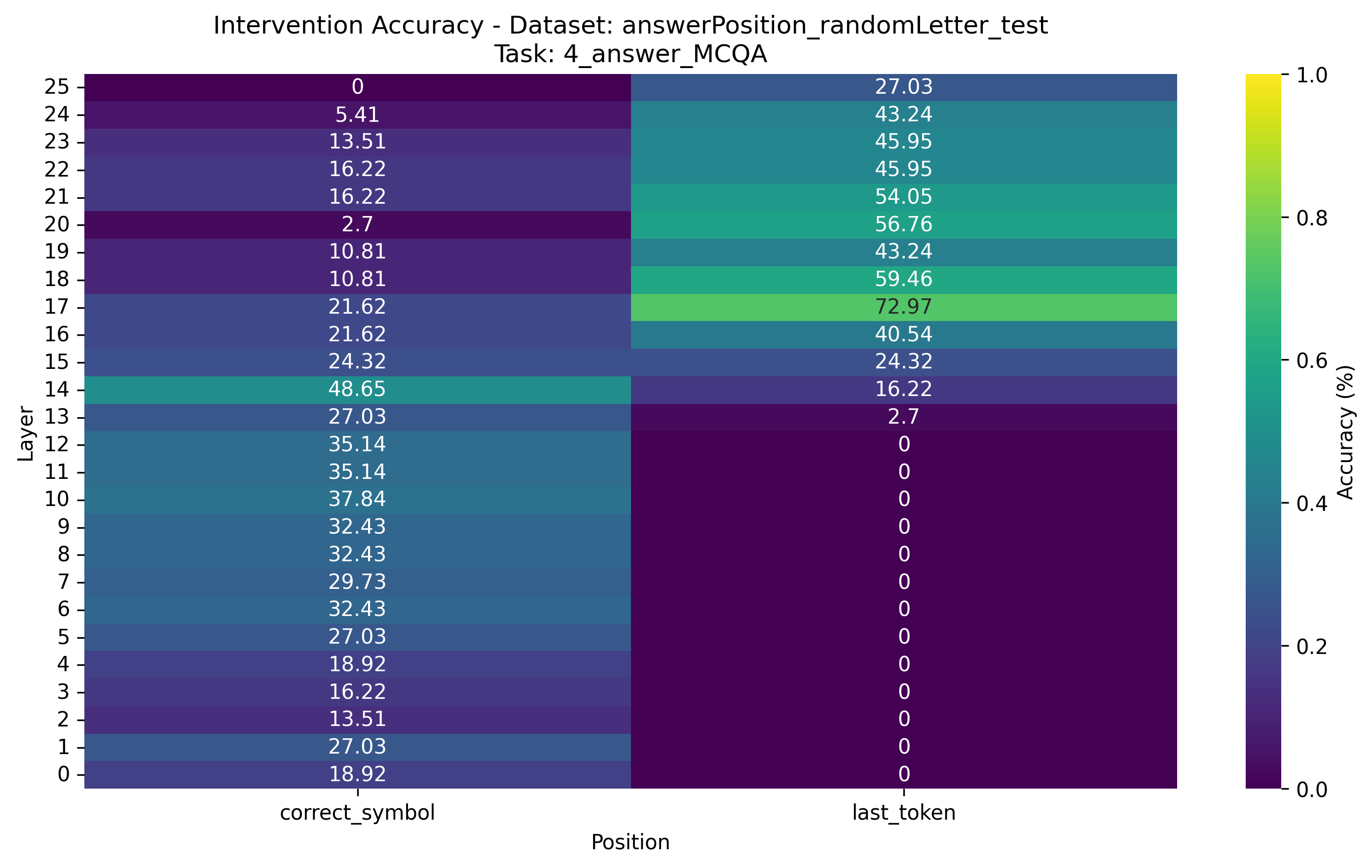

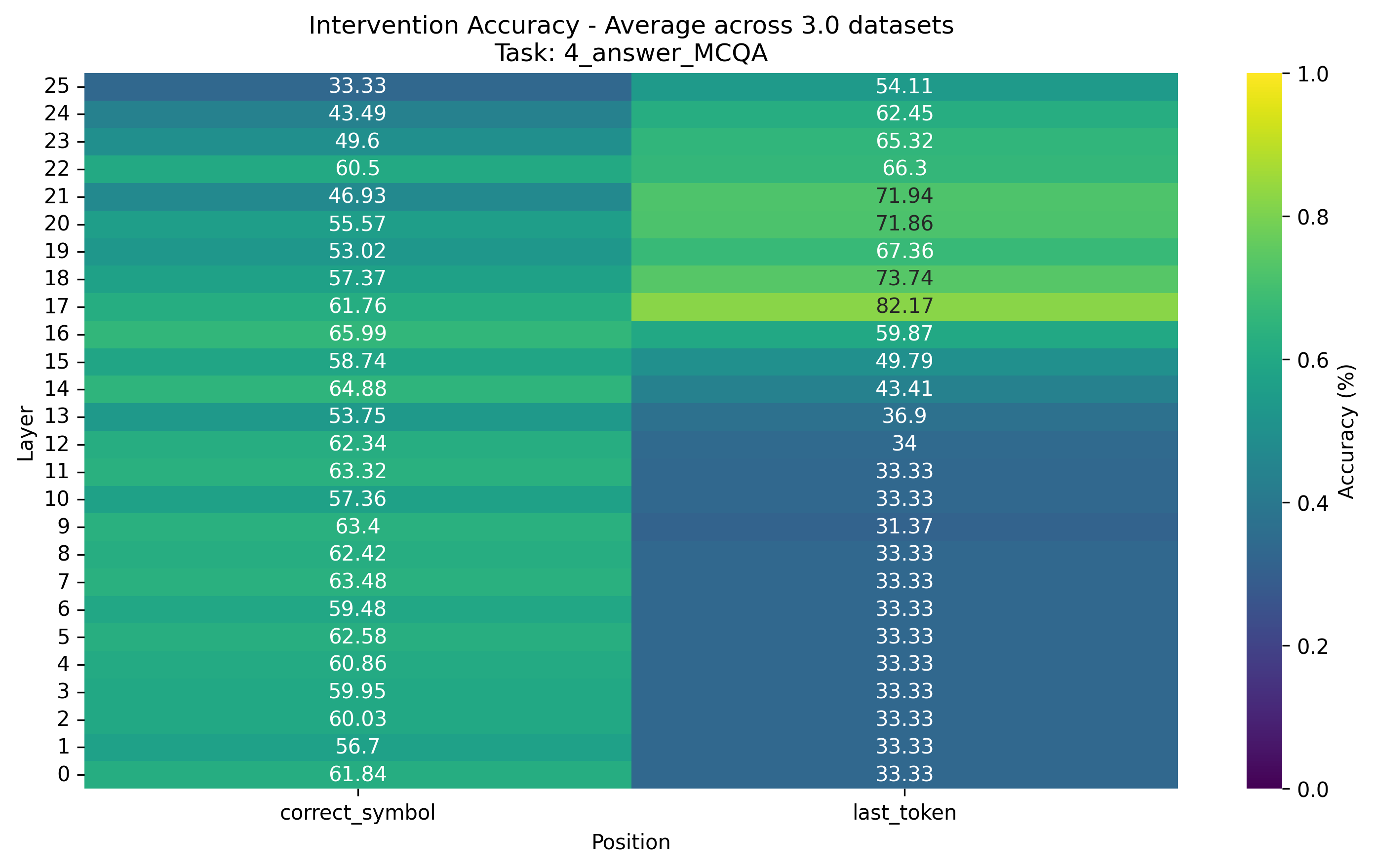

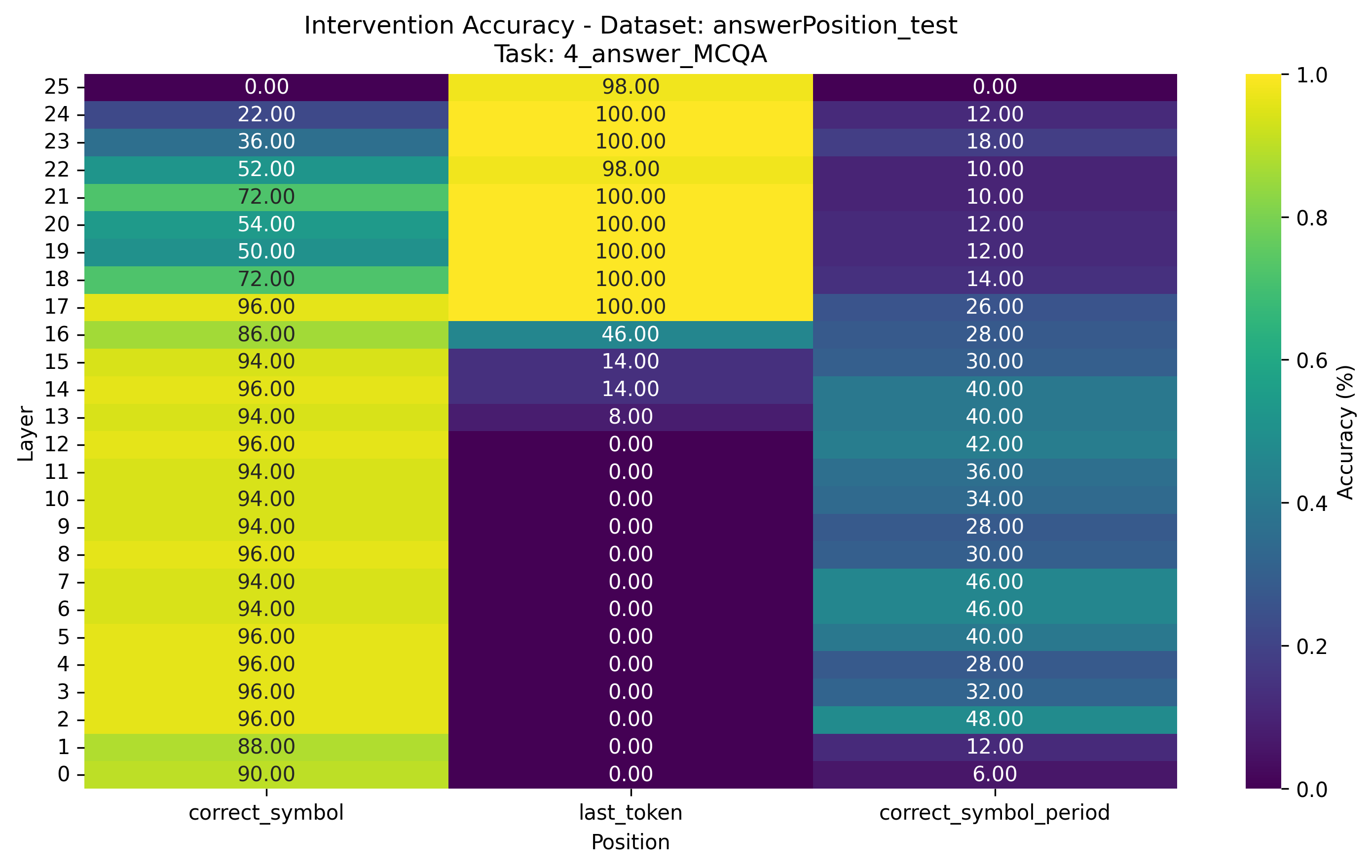

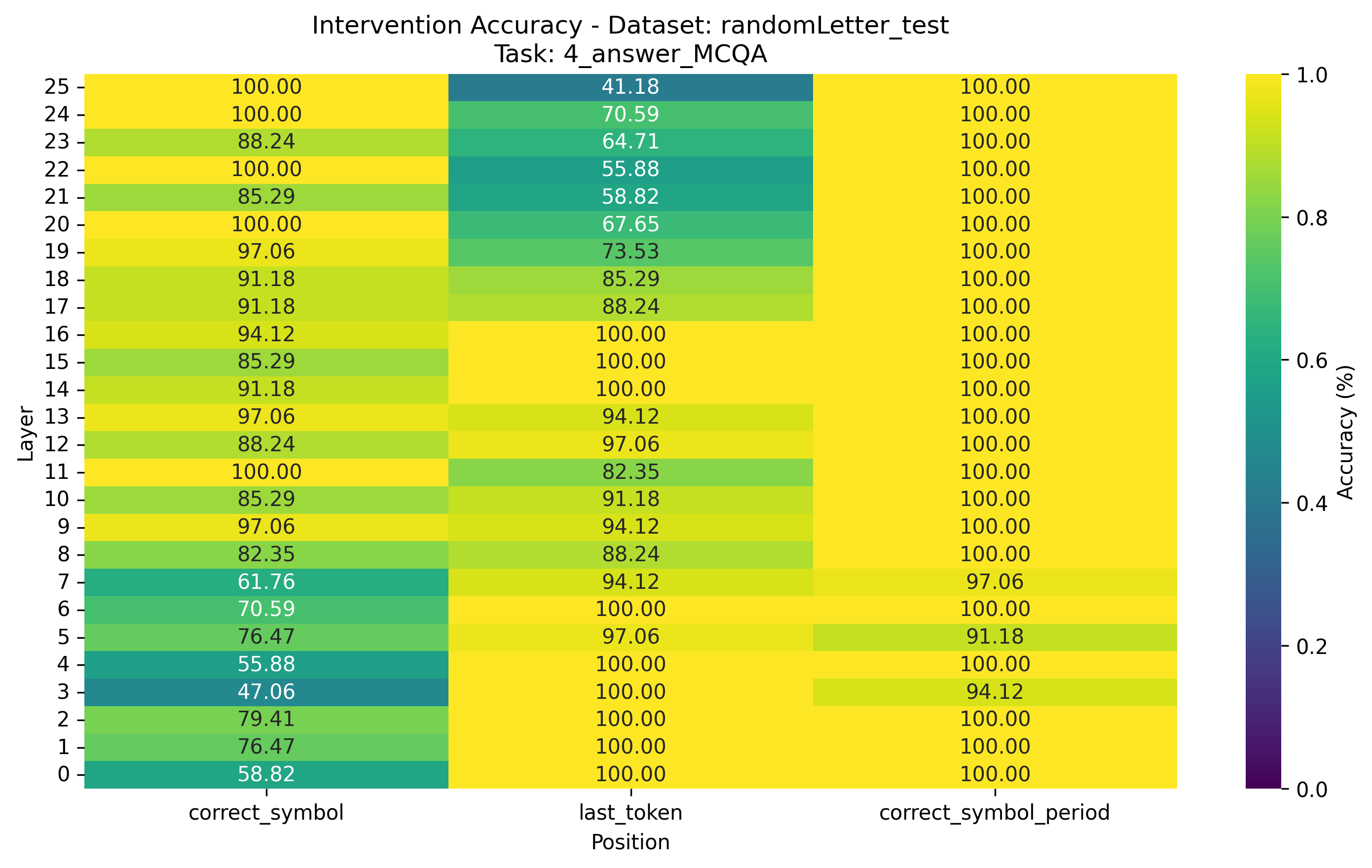

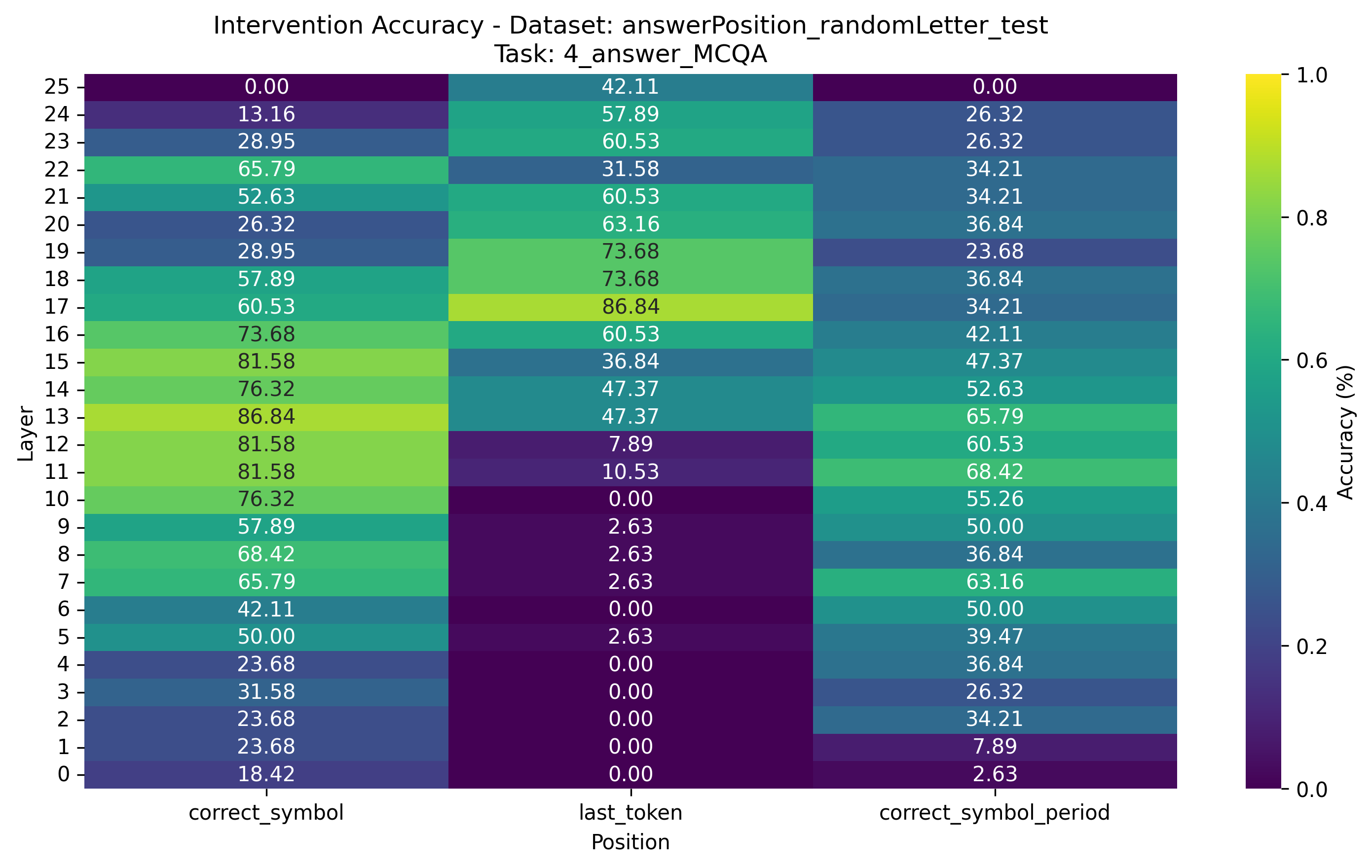

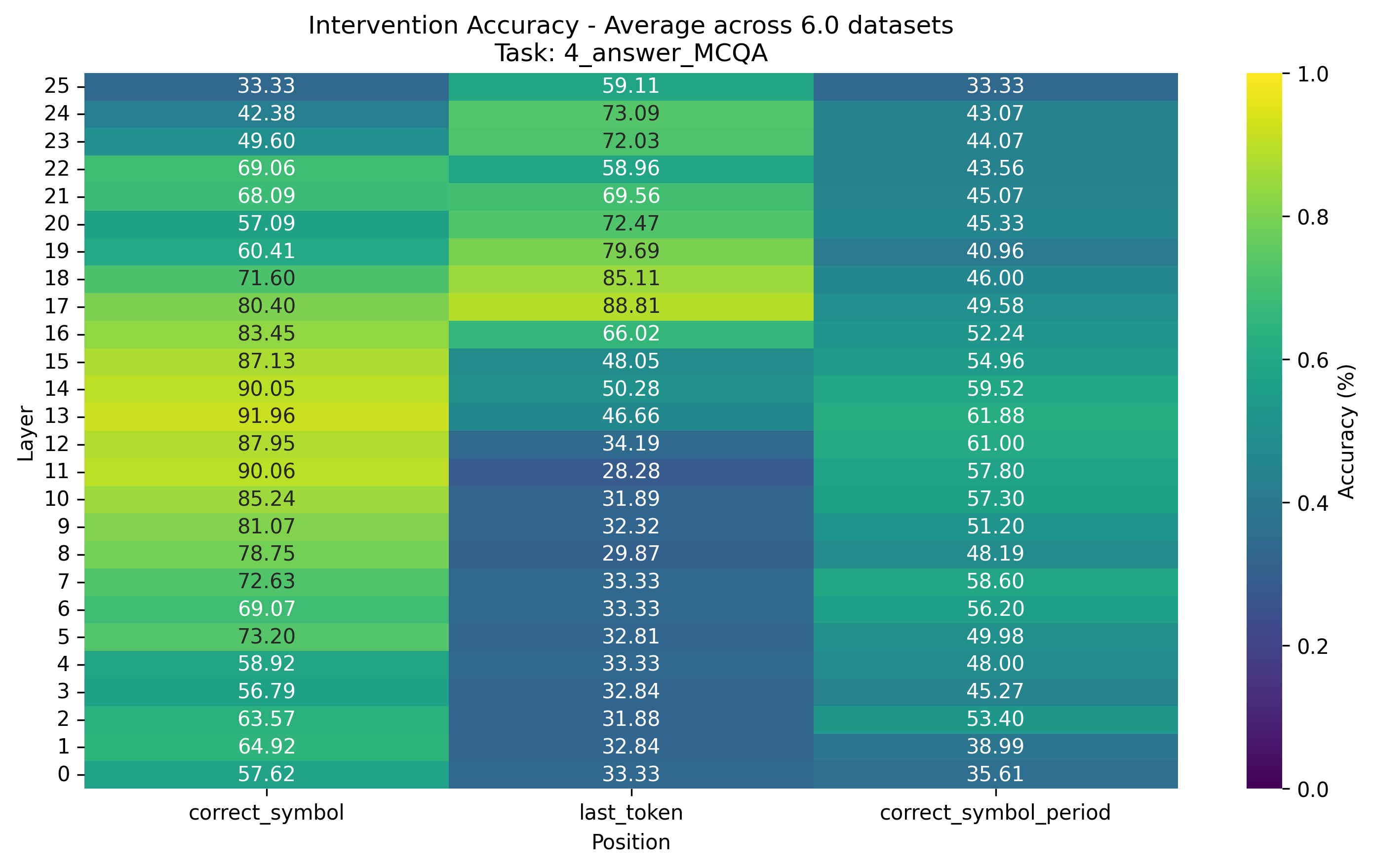

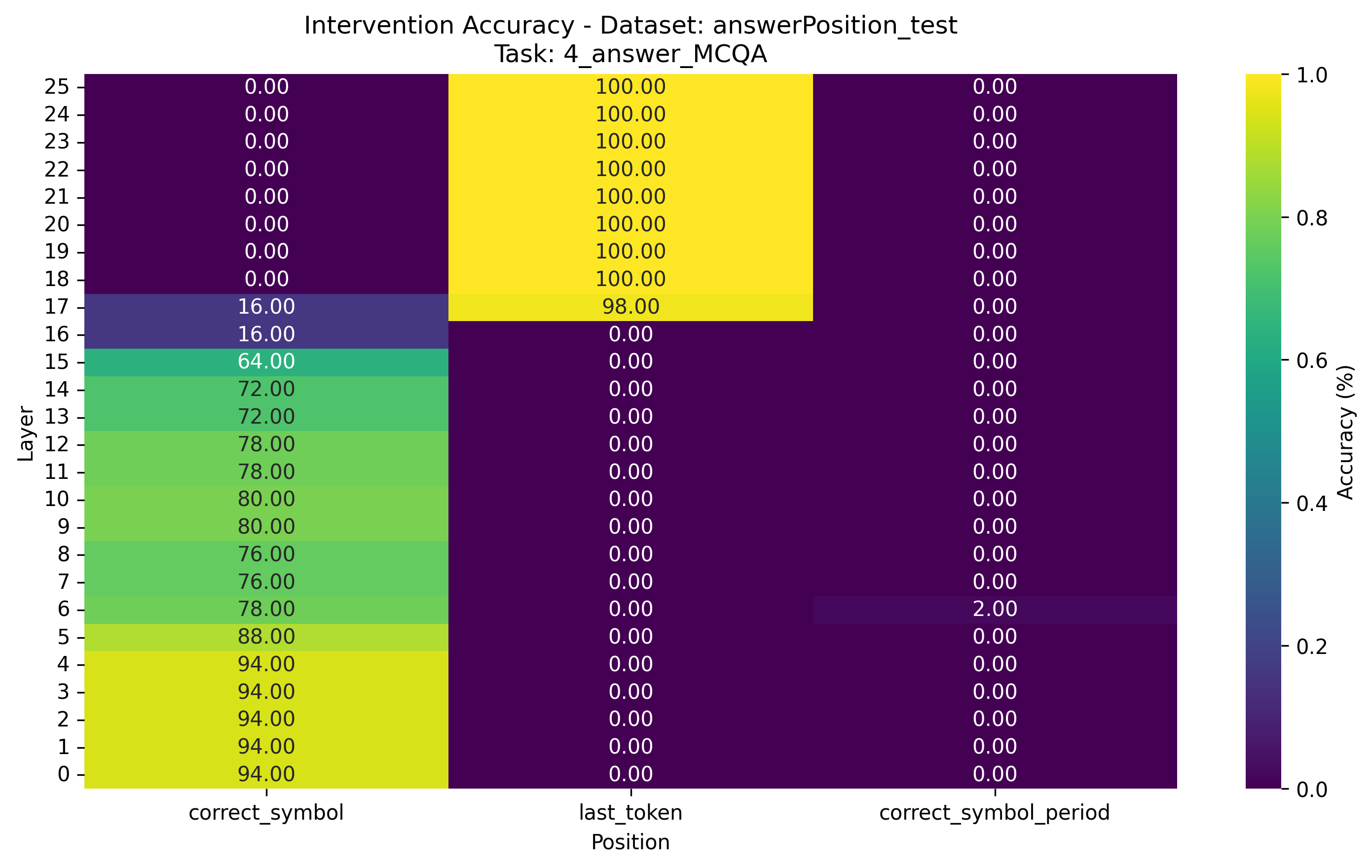

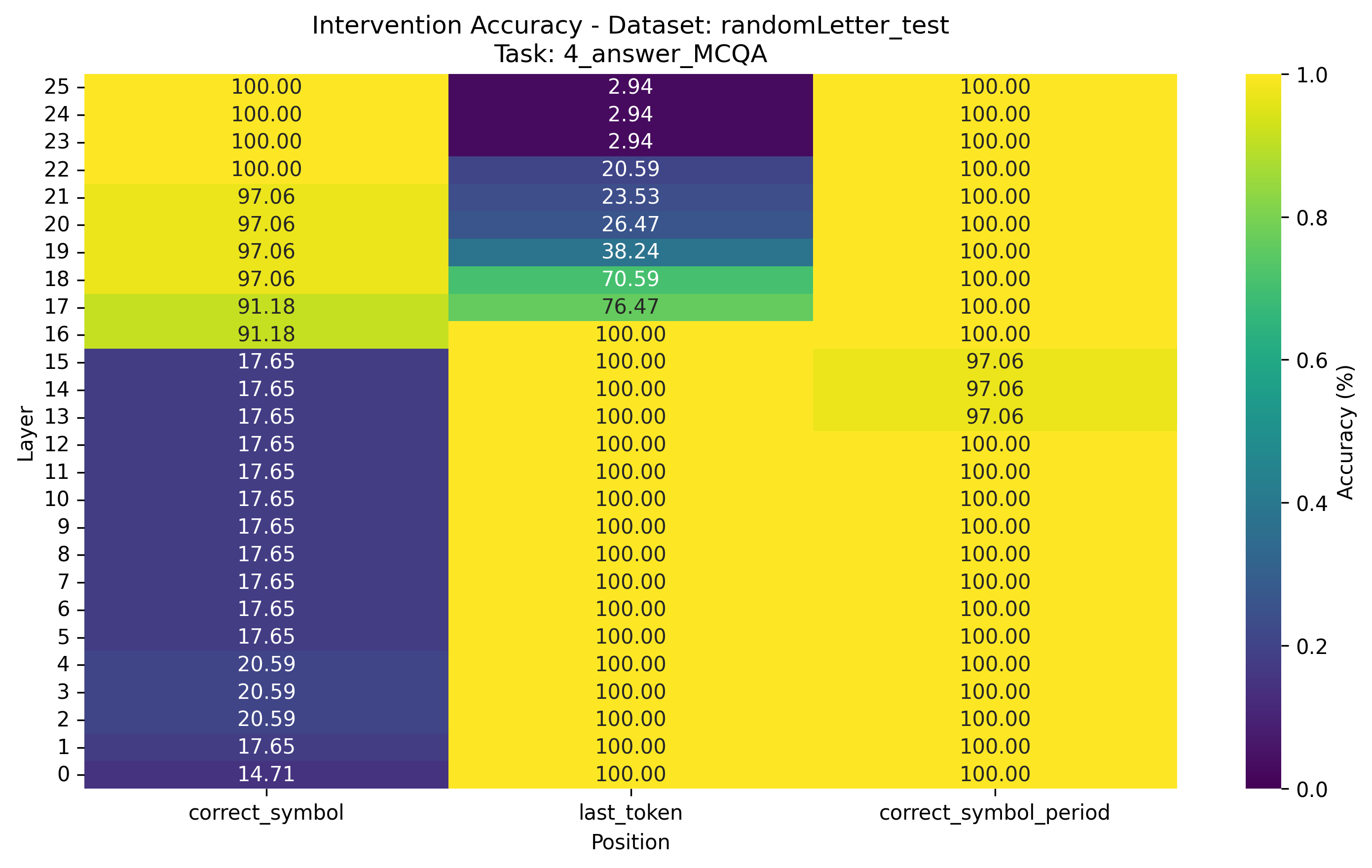

Results. Layer-wise CDAS results are shown in Figure 3 and Figure 6, layer-wise DAS results in Figure 4 and Figure 7, and layer-wise full-vector results in Figure 5 and Figure 8. Comparing Figures 3 and 4, we can see that CDAS and DAS display qualitatively similar layer-wise performance for $O_\text{Answer}$. However, CDAS often yields low IIAs for $X_\text{Order}$, except for the answerPosition counterfactual.

Aggregate results are shown in Table 1, Table 2 and Table 3. IIA averaged across all layers tells us about the robustness of a causal variable localization method, whereas the highest IIA of an individual layer is the best result obtained through layer-wise search. On the two multiple-choice tasks, the averaged CDAS performance with respect to $O_\text{Answer}$ is on par with DAS. However, its average performance with respect to $X_\text{Order}$ and $X_\text{Carry}$ is only comparable to the unsupervised full-vector baseline. This underperformance indicates that CDAS fails to identify useful features for $X_\text{Order}$ and $X_\text{Carry}$. Both the positive and negative empirical results support our previous analysis that CDAS is not useful for internal causal variables.
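The two aggregates can be computed from layer-wise IIAs as below. The layer values are hypothetical, and the "average (best)" output format is my reading of how the table cells are laid out, not something the tables state explicitly.

```python
from statistics import mean

def summarize(layer_iia):
    """Return (mean IIA across layers, best single-layer IIA) as whole percentages."""
    if not layer_iia:
        raise ValueError("need at least one layer")
    return round(100 * mean(layer_iia)), round(100 * max(layer_iia))

# Hypothetical IIA values for four layers.
layer_iia = [0.50, 0.62, 0.91, 0.77]

avg, best = summarize(layer_iia)
print(f"{avg} ({best})")  # 70 (91)
```

A large gap between the two numbers means that good performance is confined to a few layers, so the average is the more conservative indicator.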

Takeaway. CDAS can only be used to align neural representations with high-level variables directly related to output content or properties of outputs, not the internal causal variables of high-level causal models.

Acknowledgement

This post was inspired by a conversation with Professor Yonatan Belinkov. His curiosity regarding CDAS’s performance on MIB helped clarify these limitations, and I’m grateful for the nudge to get these results out there.

Appendix

High-level causal model

If you found this useful, please cite this as:

Bao, Yuntai (Feb 2026). Concept Distributed Alignment Search for Faithful Representation Steering. colored-dye’s blog. https://colored-dye.github.io.

or as a BibTeX entry:

@misc{bao2026concept,

title = {Concept Distributed Alignment Search for Faithful Representation Steering},

author = {Bao, Yuntai},

note = {Blog post},

year = {2026},

month = {Feb},

url = {https://colored-dye.github.io/blog/2026/concept-das/}

}